Faculty Checklist for Secure Online Test Monitoring

Remote assessment is now routine across higher education, certification, and corporate learning. Yet stakes rise when online test monitoring drives decisions about grades, credentials, and careers.

Faculty therefore need a clear, research-informed playbook to protect integrity while respecting privacy and accessibility.

This article distills the latest market data, legal signals, and expert advice into an actionable checklist. Use it to configure every remotely proctored exam with confidence, transparency, and student support.

Global spending on proctoring software already exceeds US$800 million, and analysts forecast that it will roughly double by 2029. Meanwhile, court decisions such as Ogletree v. Cleveland State University caution campuses against intrusive room scans.

You must steer between opportunity and risk. Let’s dive in and build a proctoring plan that stands up to legal scrutiny and academic review.

Remote Proctoring Market Trends

Industry analysts project the global market to reach nearly US$2 billion by 2029, with compound annual growth estimates ranging from 10% to 17%. Advanced AI, cloud delivery, and flexible licensing make online test monitoring attractive to universities and certification boards. Educational institutions remain the dominant customer segment, though corporate L&D programs are expanding their usage. However, many campuses are negotiating shorter contracts and broadening assessment alternatives to control risk. Online test monitoring has also become a common requirement in ed-tech RFPs.
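As a rough sanity check on these growth figures, the sketch below compounds a starting market size at the low and high ends of the quoted range. The US$800 million base comes from the spending figure cited in the introduction. The five-year horizon is an assumption, since the base year of the forecasts is not stated here.

```python
def project_market(base_musd: float, cagr: float, years: int) -> float:
    """Compound a base market size (in US$ millions) at a fixed annual rate."""
    return base_musd * (1 + cagr) ** years


BASE_MUSD = 800.0  # from the "exceeds US$800 million" figure in this article
YEARS = 5          # assumption: the base year of the forecast is not given

for rate in (0.10, 0.17):
    projected = project_market(BASE_MUSD, rate, YEARS)
    print(f"{rate:.0%} annual growth over {YEARS} years: ~US${projected:,.0f}M")
```

Under these assumed inputs the range runs from roughly US$1.29 billion to US$1.75 billion, so a forecast near US$2 billion likely rests on a different base year or starting value. Treat the numbers as an illustration of how compound growth behaves, not as a market estimate.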

Growth continues, but scrutiny grows even faster. Next, we examine the legal context shaping configuration choices.

Legal And Privacy Shifts

In the 2022 Ogletree ruling, a federal district court held that a mandatory room scan was an unreasonable search under the Fourth Amendment. Consequently, several universities have disabled that feature or now require explicit student consent. Privacy advocates, the ACLU among them, demand limits on biometric processing and long data retention. FERPA adds another compliance layer, which in practice calls for clear access controls over exam recordings and timely deletion. Meanwhile, accessibility offices warn of algorithmic bias and false flags against disabled students. Sound policies turn online test monitoring from a liability into a trusted practice.

Legal and civil-rights pressures are real and rising. Therefore, faculty must configure each remotely proctored exam with precision, as the next checklist shows.

Faculty Setup Checklist Guide

Begin planning weeks before the test window opens. The following steps align with top campus guides and vendor best practice.

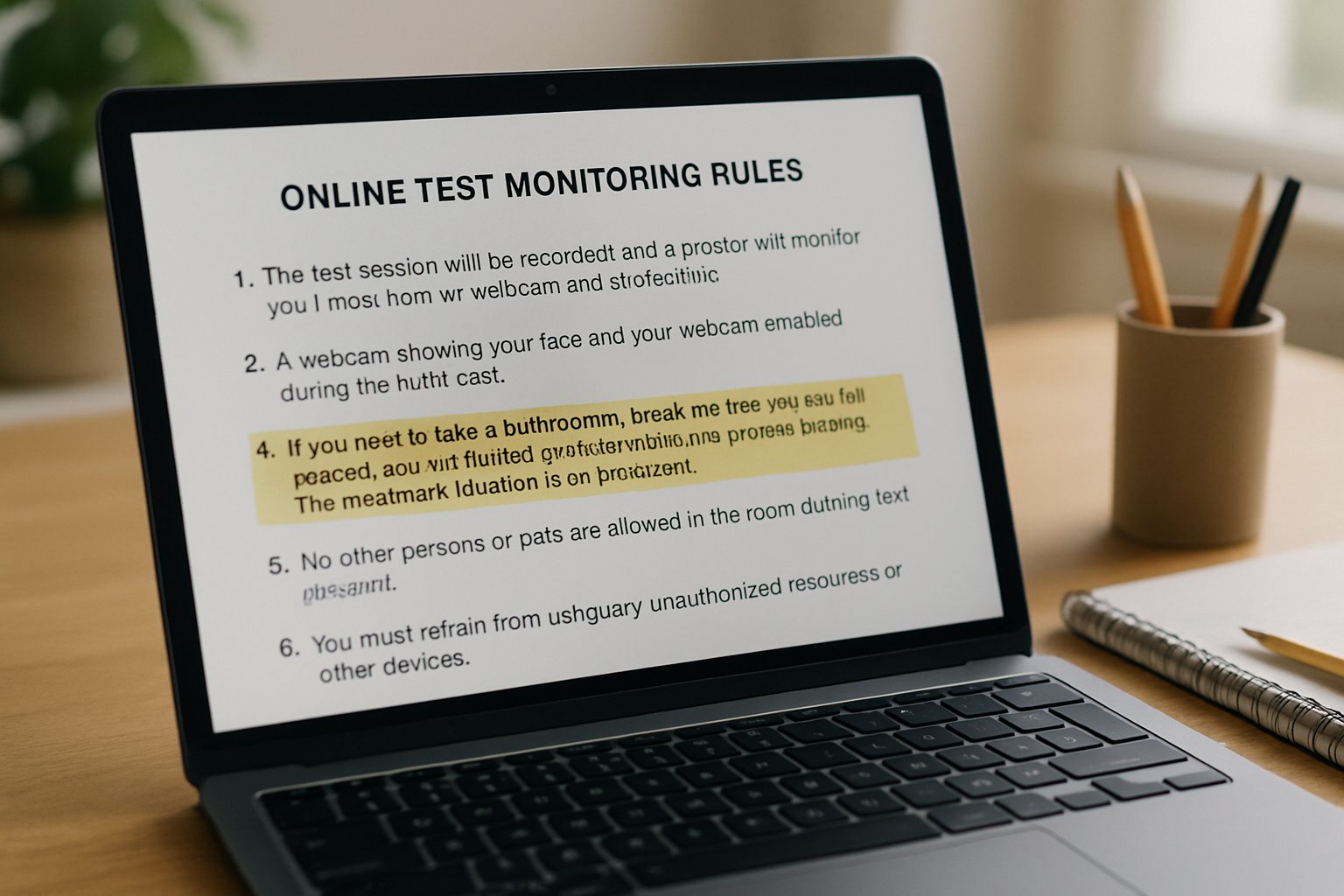

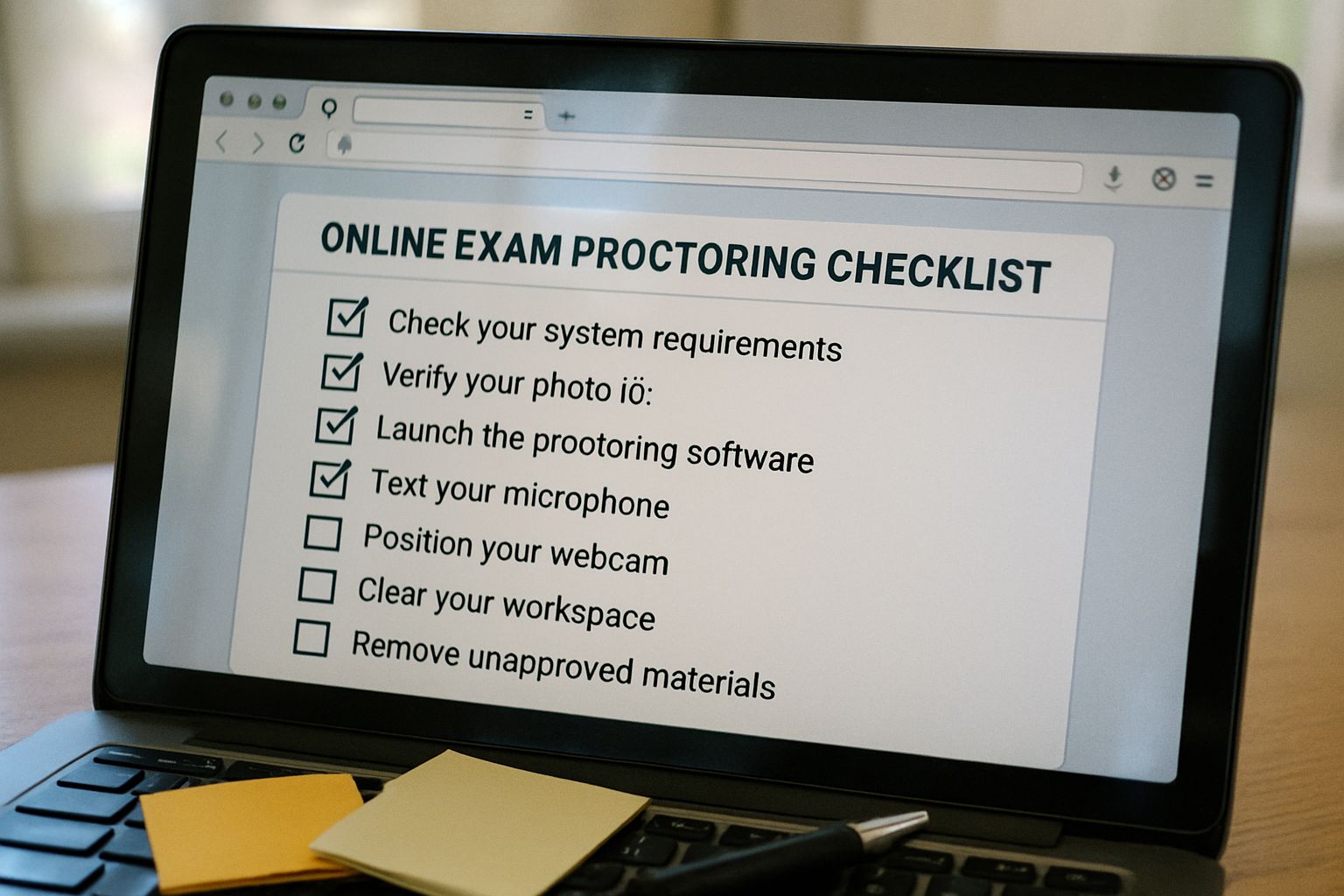

One Page Quick List

- Post a detailed syllabus notice covering tool, data use, fees, and accommodation contacts.

- Create an ungraded practice quiz to test cameras, microphones, and lockdown settings.

- Coordinate with Disability Services to confirm extended time and alternative workflows.

- Disable invasive room scans unless legal counsel grants written approval.

- Randomize questions and apply reasonable time limits tied to cognitive difficulty.

- Publish a clear appeals process and a two-step flag review protocol.
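One way to keep the quick list from being skipped is to record each exam's setup as data and check it before the test window opens. The sketch below is a minimal illustration of that idea. The `ExamSetup` class, its field names, and `checklist_gaps` are all hypothetical and do not correspond to any vendor or LMS API.

```python
from dataclasses import dataclass


@dataclass
class ExamSetup:
    """Hypothetical record of one exam's proctoring setup (not a vendor API)."""
    syllabus_notice_posted: bool = False
    practice_quiz_available: bool = False
    accommodations_confirmed: bool = False
    room_scan_enabled: bool = False
    counsel_approval_on_file: bool = False
    questions_randomized: bool = False
    time_limit_set: bool = False
    appeals_process_published: bool = False


def checklist_gaps(setup: ExamSetup) -> list:
    """Return a message for every quick-list item that is still unmet."""
    required = [
        (setup.syllabus_notice_posted, "Post the syllabus notice."),
        (setup.practice_quiz_available, "Create the ungraded practice quiz."),
        (setup.accommodations_confirmed, "Confirm accommodations with Disability Services."),
        (setup.questions_randomized, "Randomize questions."),
        (setup.time_limit_set, "Set a reasonable time limit."),
        (setup.appeals_process_published, "Publish the appeals and flag review process."),
    ]
    gaps = [message for done, message in required if not done]
    # Room scans are the one item that must be OFF unless counsel has approved.
    if setup.room_scan_enabled and not setup.counsel_approval_on_file:
        gaps.append("Disable room scans or obtain written approval from legal counsel.")
    return gaps


# Example: a draft setup that still has several open items.
draft = ExamSetup(
    syllabus_notice_posted=True,
    practice_quiz_available=True,
    room_scan_enabled=True,
)
for gap in checklist_gaps(draft):
    print("TODO:", gap)
```

An empty result means every quick-list item is covered. In practice the same checks could live in a shared spreadsheet or form; the point is that the room scan rule is enforced as a condition rather than left to memory.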

Together, these items build transparency and reduce disputes. Effective online test monitoring depends on that groundwork. Now, let’s move to operational tips for exam day.

Exam Day Execution Tips

Remind students 48 hours ahead to retest their equipment and internet connection. Ask them to log in early so that authentication does not eat into response time. Keep the launch window narrow enough to protect exam security yet wide enough to accommodate students in different time zones. During the session, online test monitoring tools log session activity for later analysis. Select one proctoring mode and use it consistently to prevent software conflicts. Meanwhile, have IT staff or vendor chat support standing by for live triage. A calm student starts a remotely proctored exam with clearer focus and fewer technical surprises.
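To see how a launch window lands for students in different regions, it can help to convert it to local times before announcing it. The sketch below uses only the Python standard library. The exam date, the 12-hour window, and the sample UTC offsets are hypothetical values chosen for illustration. Fixed offsets ignore daylight saving time, so a real schedule would more likely use named zones from the standard `zoneinfo` module.

```python
from datetime import datetime, timedelta, timezone

REMINDER_LEAD = timedelta(hours=48)  # the 48-hour reminder suggested above


def reminder_time(window_open_utc: datetime) -> datetime:
    """When to send the 'retest your equipment' reminder."""
    return window_open_utc - REMINDER_LEAD


def local_window(window_open_utc: datetime, window_close_utc: datetime,
                 utc_offset_hours: float):
    """Express the launch window in a student's local time (fixed offset)."""
    tz = timezone(timedelta(hours=utc_offset_hours))
    return window_open_utc.astimezone(tz), window_close_utc.astimezone(tz)


# Hypothetical exam: the window opens at 13:00 UTC and stays open for 12 hours.
window_open = datetime(2025, 5, 12, 13, 0, tzinfo=timezone.utc)
window_close = window_open + timedelta(hours=12)

print("Send reminder at:", reminder_time(window_open).isoformat())
for label, offset in [("UTC-5", -5), ("UTC+1", 1), ("UTC+8", 8)]:
    start, end = local_window(window_open, window_close, offset)
    print(f"{label}: {start:%a %H:%M} to {end:%a %H:%M}")
```

If any region's window falls mostly overnight, that is a signal to widen the window or offer a second sitting.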

Smooth operations lower student stress and flag volume. Next, attention shifts to reviewing what the system records.

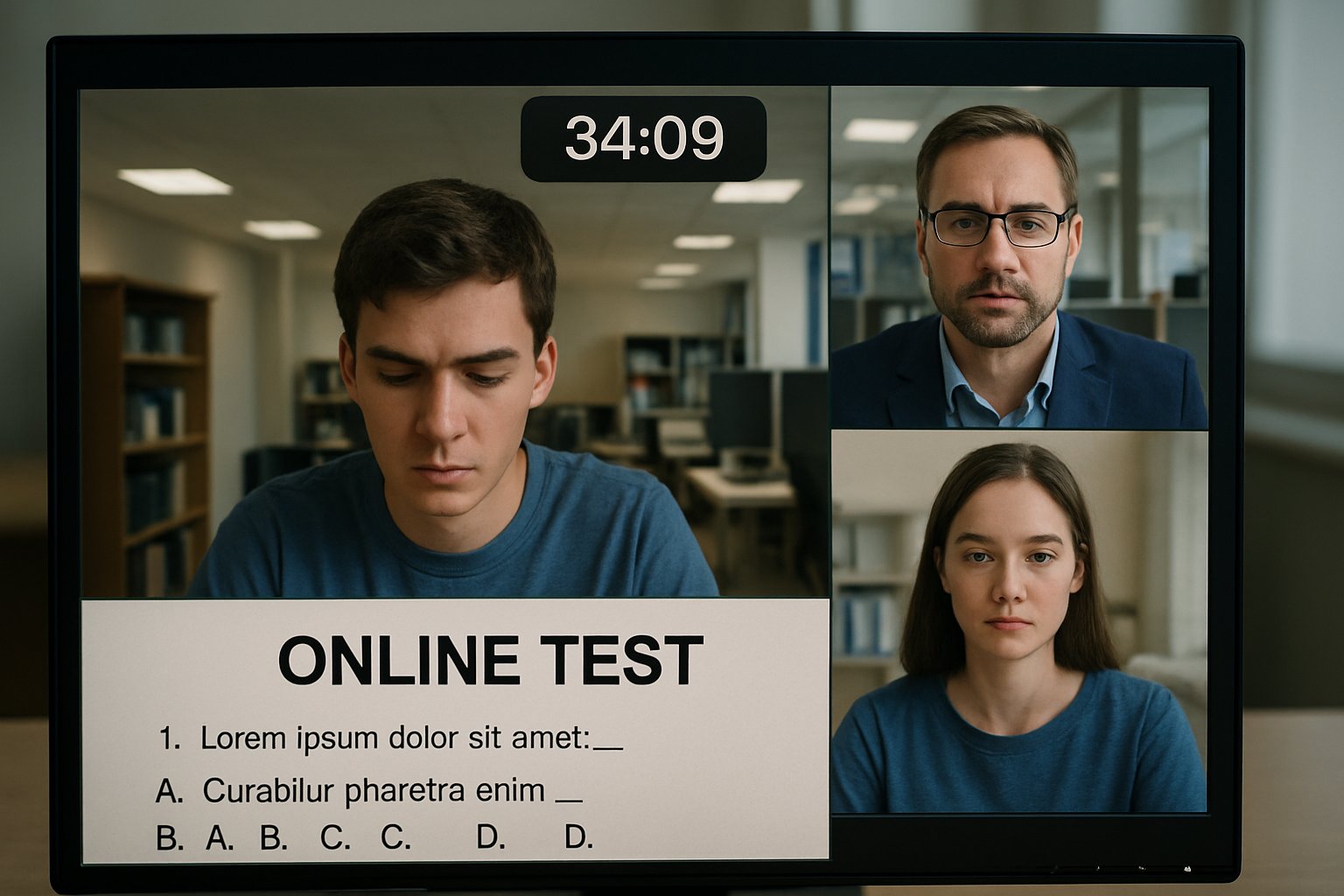

Post Exam Review Protocols

Never treat automated alerts as definitive proof. Online test monitoring generates many data points that require human interpretation. First, instructors examine flagged segments alongside supporting metadata such as eye-gaze charts. Second, they interview the student before pursuing discipline. As a result, false positives are cleared while genuine violations proceed through formal policy. Store recordings only as long as retention rules allow, then delete them securely.
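The two-step review described above can be modeled as a small state machine in which a flag cannot reach the formal process without passing through human review and a student interview. This is a minimal sketch of that rule. The `Flag` class, the status names, and both functions are hypothetical and not taken from any proctoring product.

```python
from dataclasses import dataclass
from enum import Enum


class FlagStatus(Enum):
    PENDING = "pending"                  # raised by the tool, not yet reviewed
    CLEARED = "cleared"                  # judged a false positive
    NEEDS_INTERVIEW = "needs_interview"  # instructor wants to hear from the student
    REFERRED = "referred"                # sent to the formal integrity process


@dataclass
class Flag:
    student_id: str
    reason: str
    status: FlagStatus = FlagStatus.PENDING


def instructor_review(flag: Flag, looks_suspicious: bool) -> Flag:
    """Step 1: a human reviews the flagged segment and its metadata."""
    if flag.status is not FlagStatus.PENDING:
        raise ValueError("Only pending flags can receive a first review.")
    flag.status = (FlagStatus.NEEDS_INTERVIEW if looks_suspicious
                   else FlagStatus.CLEARED)
    return flag


def after_interview(flag: Flag, concern_remains: bool) -> Flag:
    """Step 2: the student is heard before any referral is made."""
    if flag.status is not FlagStatus.NEEDS_INTERVIEW:
        raise ValueError("A flag cannot be referred without a student interview.")
    flag.status = FlagStatus.REFERRED if concern_remains else FlagStatus.CLEARED
    return flag


# Example: an automated gaze alert that turns out to be a false positive.
flag = Flag(student_id="s-001", reason="looked away from screen")
instructor_review(flag, looks_suspicious=True)
after_interview(flag, concern_remains=False)
print(flag.status.value)
```

Because `after_interview` rejects any flag that has not been through the first review, an automated alert can never jump straight to a referral.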

A documented workflow protects fairness and privacy. Before closing, consider broader risk-mitigation tactics.

Key Risk Mitigation Tactics

Start with an honest needs analysis; some courses thrive with open-book alternatives instead. Moreover, offer an on-campus option for students lacking private space or stable bandwidth. Use moderate flag sensitivity to reduce bias against students with darker skin tones or neurodivergent behaviors. When configured wisely, online test monitoring supports equity rather than threatening it. Finally, run periodic vendor audits covering security, uptime, and data handling.
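One way to check whether a sensitivity setting is producing uneven results is to compare flag rates across student groups after an exam. The sketch below shows the arithmetic only. The group labels and session records are made-up placeholders, and how an institution defines groups, and whether it may collect that data at all, is a policy question outside this example.

```python
def flag_rates(sessions):
    """Compute the share of flagged sessions per group.

    `sessions` is an iterable of (group_label, was_flagged) pairs.
    """
    totals, flagged = {}, {}
    for group, was_flagged in sessions:
        totals[group] = totals.get(group, 0) + 1
        flagged[group] = flagged.get(group, 0) + int(was_flagged)
    return {group: flagged[group] / totals[group] for group in totals}


def rate_ratio(rates, group, reference):
    """How many times more often `group` is flagged than `reference`.

    Returns None when the reference group has no flags at all.
    """
    if rates[reference] == 0:
        return None
    return rates[group] / rates[reference]


# Placeholder records for illustration only, not real exam data.
sessions = [
    ("group_a", True), ("group_a", False),
    ("group_b", False), ("group_b", False),
    ("group_b", True), ("group_b", False),
]
rates = flag_rates(sessions)
print(rates)
print(rate_ratio(rates, "group_a", "group_b"))
```

A ratio well above 1 does not prove bias on its own, especially with small samples, but it is a reasonable trigger for lowering sensitivity or raising the issue in the next vendor audit.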

Proactive safeguards keep your institution's reputation intact. We can now summarize the full strategy.

Across the full cycle—planning, delivery, and review—you now have a repeatable blueprint. Follow it and online test monitoring becomes a transparent, defensible part of your assessment toolkit. Consistent preparation, conservative settings, and fair review neutralize most technical, legal, and equity risks. Choosing a reliable partner makes every remotely proctored exam smoother for faculty and students.

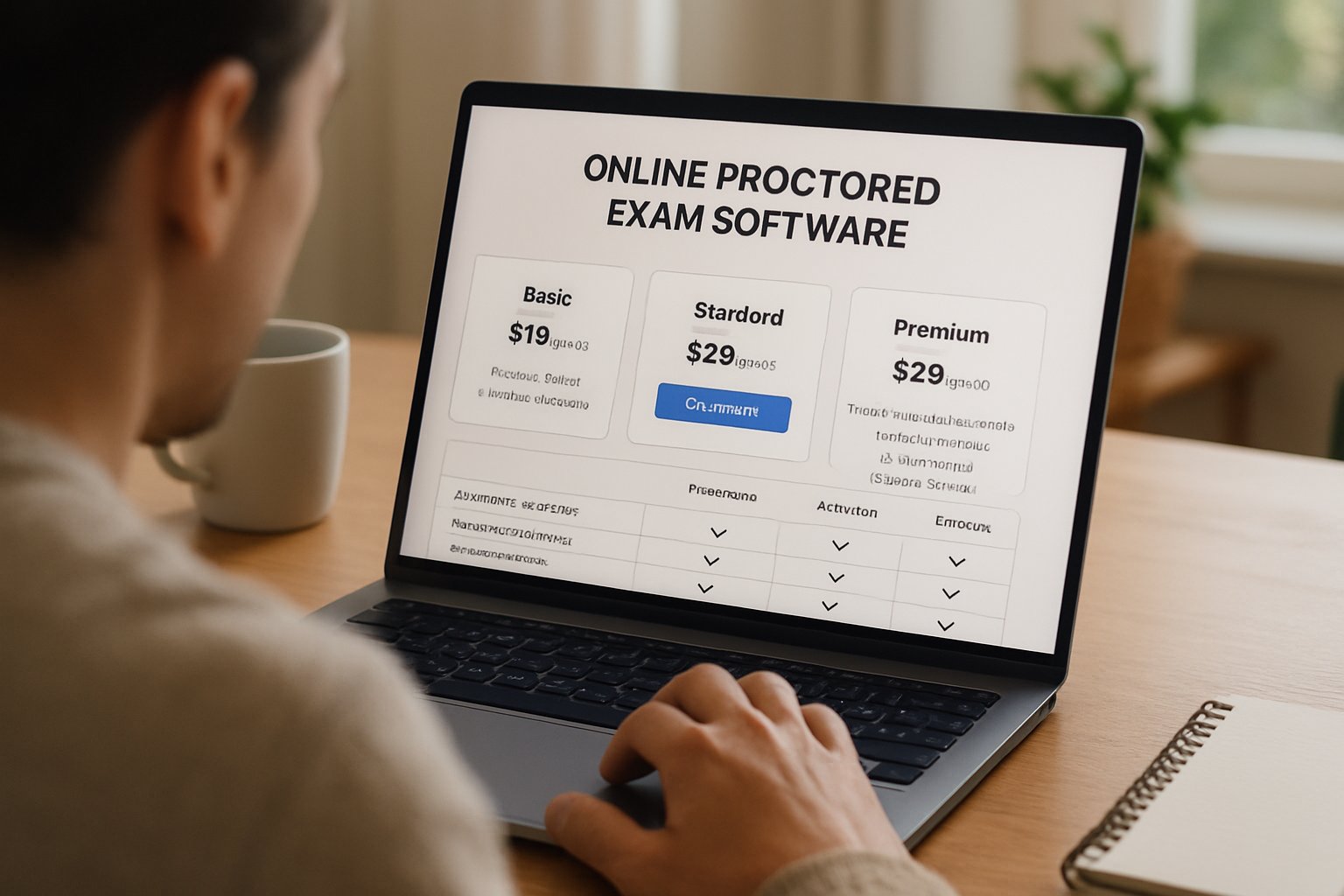

Why Proctor365?

Our AI-powered proctoring engine pairs advanced identity verification with scalable exam monitoring trusted by global exam bodies. The platform delivers real-time analytics, low-latency video, and robust data protection. Setup typically completes in minutes and integrates smoothly with major LMS platforms. Therefore, your staff stay focused on teaching while integrity stays intact. Experience streamlined online test monitoring today by visiting Proctor365.

Frequently Asked Questions

- What is online test monitoring and how does it ensure exam integrity?

Online test monitoring employs AI and real-time analytics to secure exam integrity. It uses robust identity verification and fraud prevention measures, ensuring transparent and fair assessments while upholding high academic standards.

- What best practices help configure a secure online proctored exam?

Best practices include detailed pre-exam notices, ungraded system checks, disabling intrusive scans, and clear appeal protocols. These measures leverage AI proctoring and identity verification to ensure compliance and reduce false flags.

- How does Proctor365 address legal concerns and privacy in remote proctoring?

Proctor365 follows strict legal and privacy standards by employing secure data handling, limited recording retention, and non-intrusive identity checks. This ensures compliance with regulations while minimizing student privacy intrusions.

- How does AI proctoring by Proctor365 enhance exam security and fairness?

Proctor365’s AI proctoring offers real-time analytics, secure identity verification, and advanced fraud prevention. These features ensure unbiased exam monitoring, reduce technical risks, and create a secure, efficient testing environment for all.