Remote Exam Proctoring Guide: Beginner Tips & Best Practices

Remote exam proctoring exploded after 2020, yet many teams still feel unsure about the basics. This guide breaks down how remote exam proctoring works, why institutions deploy it, and how beginners can start safely.

Why Proctoring Matters Today

Academic integrity faces new threats in fully online settings. Consequently, universities, ed-tech firms, and corporate L&D teams seek reliable ways to prevent cheating in online exams. Remote exam proctoring delivers real-time or recorded oversight, helping organizations satisfy accreditation and employer demands.

Market analysts estimate the global segment at several billion dollars, although figures vary. Nevertheless, the adoption trend is clear: remote proctored exams are now common for high-stakes distance assessments across North America and beyond.

Key takeaway: Integrity risks rise online; therefore, oversight tools become essential. The next section explains the available models.

Key Proctoring Models Explained

Organizations can choose from four primary approaches.

Live human monitoring. A proctor watches each candidate through webcam and audio. This option suits critical certifications but costs more.

Record and review. Sessions get captured, then checked later. Consequently, staff can audit incidents on their own schedule.

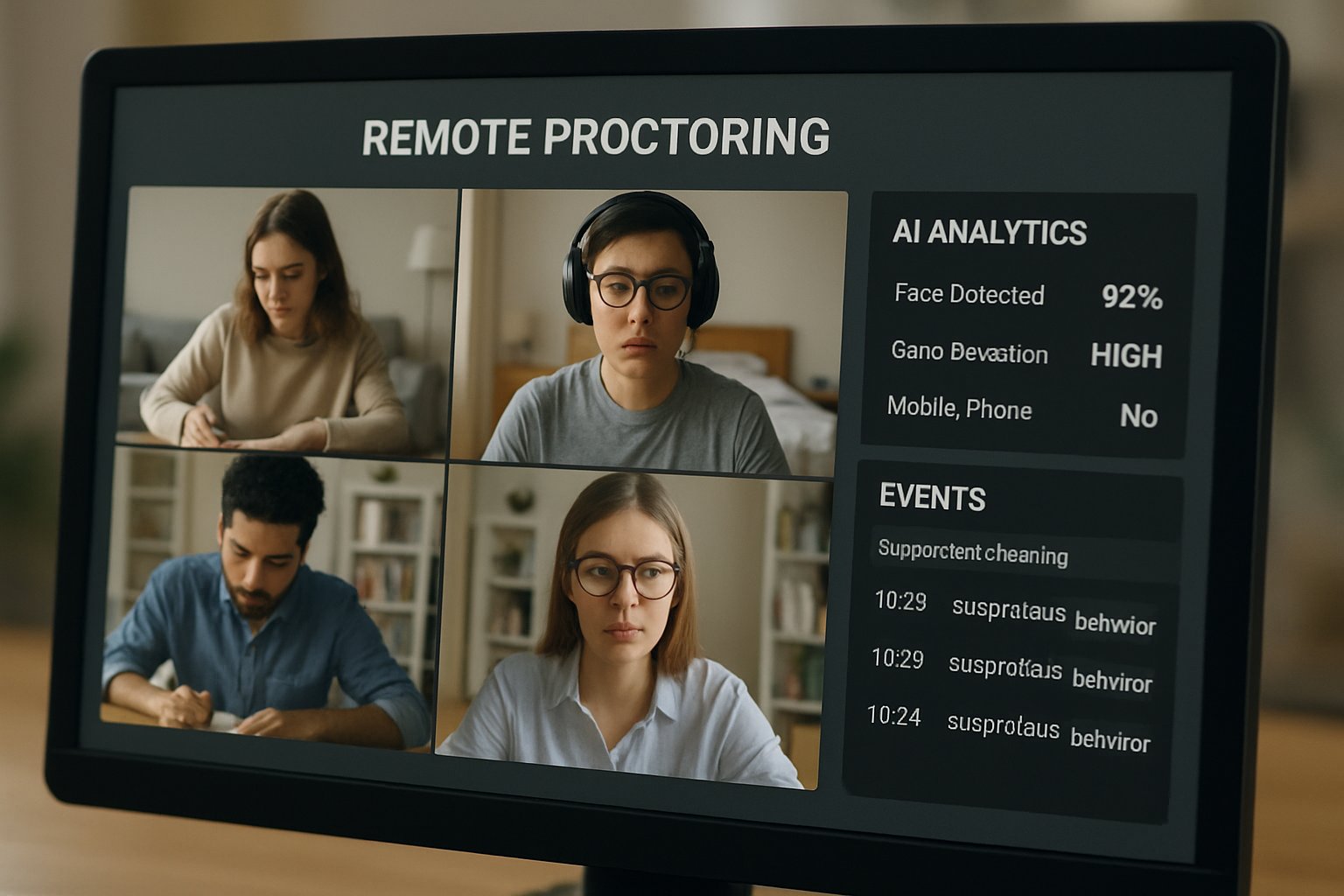

Automated AI flagging. Algorithms scan eye movement, background sounds, or extra faces. However, bias and false positives require careful human confirmation.

Hybrid workflows. Many platforms combine AI with human eyes, balancing scale and accuracy.
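To make the hybrid idea concrete, here is a minimal sketch of how AI flags might be routed to human reviewers. The `Flag` fields, the flag names, and the `REVIEW_THRESHOLD` value are illustrative assumptions, not any vendor's actual API or settings.

```python
from dataclasses import dataclass


@dataclass
class Flag:
    session_id: str
    kind: str          # hypothetical labels, e.g. "extra_face", "gaze_away"
    confidence: float  # hypothetical model score in [0, 1]


# Assumed cutoff; a real deployment would tune it against audited sessions.
REVIEW_THRESHOLD = 0.5


def triage(flags):
    """Split AI flags into a human review queue and a log-only list.

    Nothing here penalizes a candidate automatically: flags at or above
    the threshold are only queued for a person to confirm or dismiss.
    """
    review_queue = sorted(
        (f for f in flags if f.confidence >= REVIEW_THRESHOLD),
        key=lambda f: f.confidence,
        reverse=True,  # highest-confidence flags are reviewed first
    )
    logged_only = [f for f in flags if f.confidence < REVIEW_THRESHOLD]
    return review_queue, logged_only


flags = [
    Flag("s1", "gaze_away", 0.30),
    Flag("s2", "extra_face", 0.92),
    Flag("s3", "background_voice", 0.61),
]
review_queue, logged_only = triage(flags)
```

Keeping the human decision separate from the automated score is the point of the design: the algorithm supplies scale, and the reviewer supplies the judgment that guards against false positives.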

Key takeaway: Each model trades cost for immediacy and human judgment. Next, we examine the underlying technologies.

Core Proctoring Technology Stack

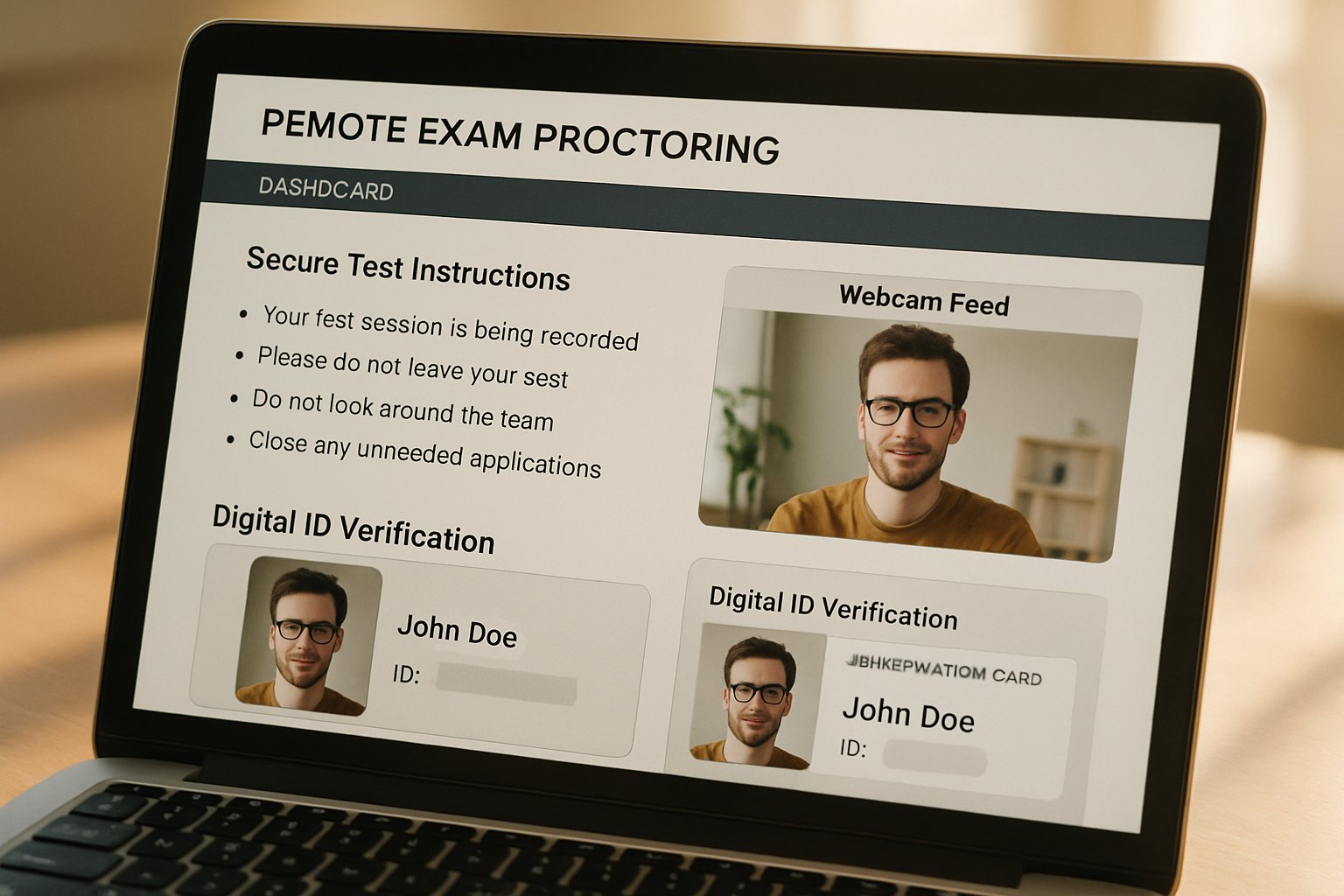

Identity Verification Methods Explained

First, systems verify who is testing. Common methods include photo ID matching, facial recognition, and biometric authentication for exams. Moreover, some vendors add voice or typing patterns to strengthen confidence.

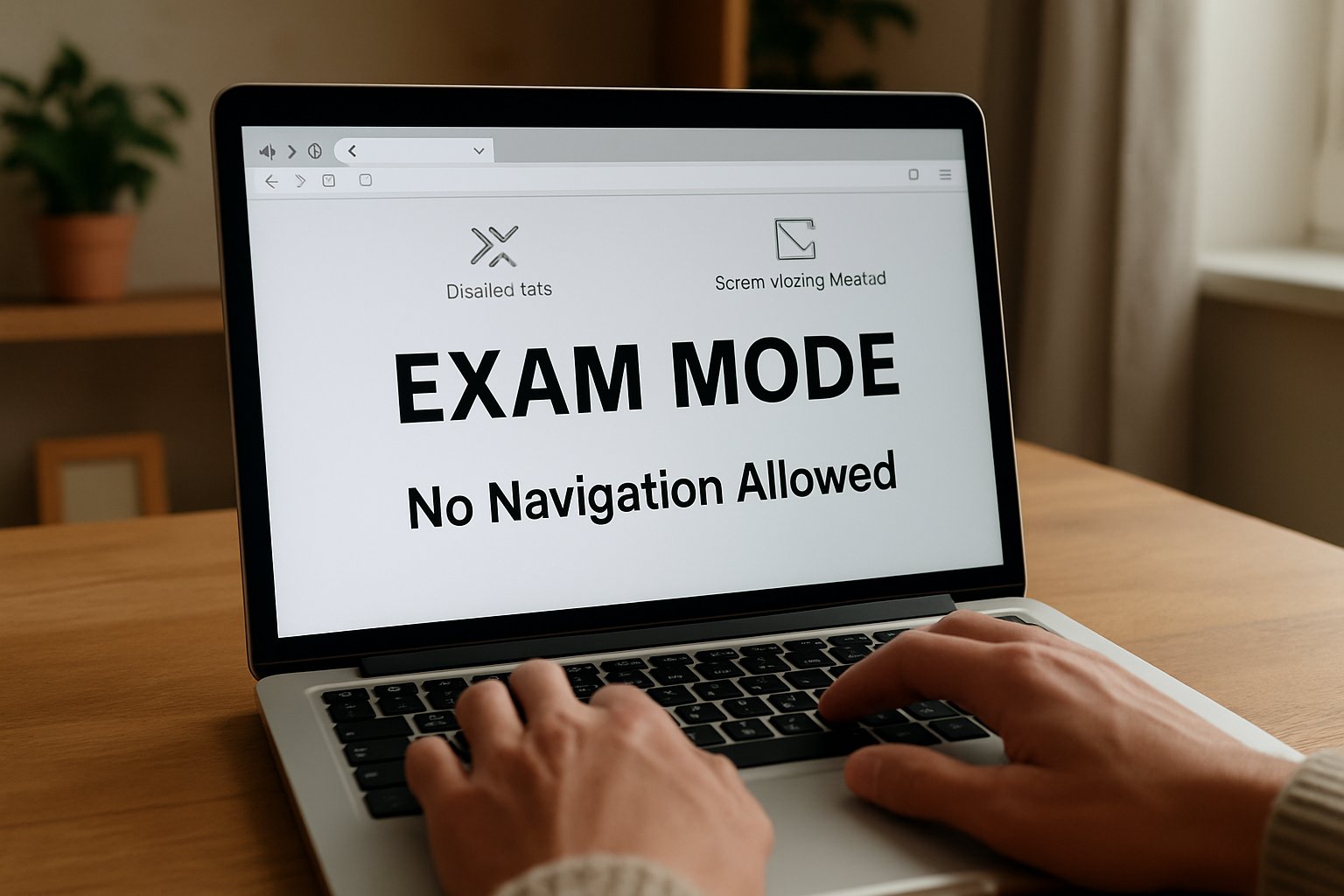

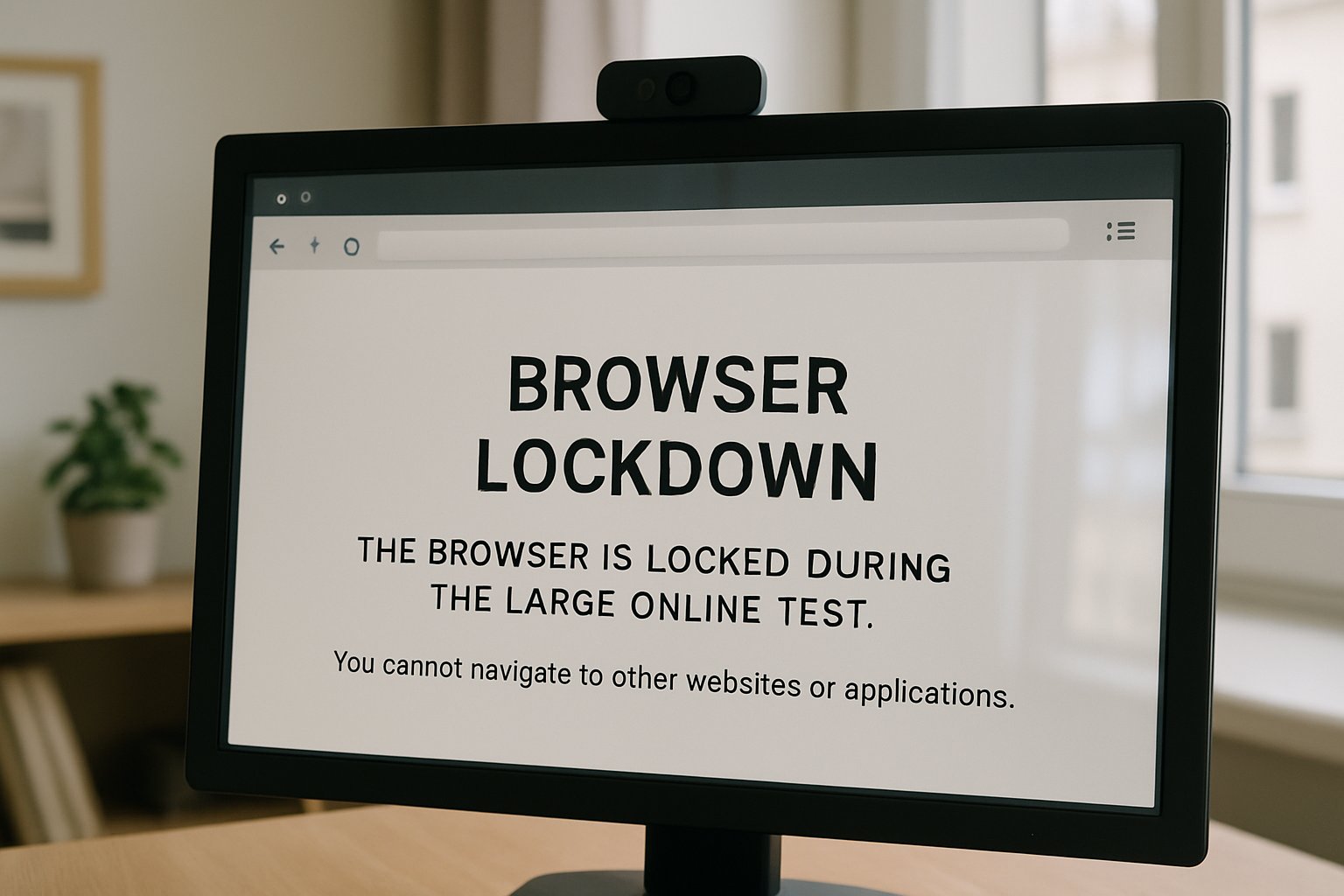

Secure Browser Control Features

Second, secure browsers restrict system functions. They block new tabs, screen captures, and printing. Additionally, they log every keystroke and click for later review.

Other components include:

- 360° room scans to detect hidden materials.

- Screen recording and watermarking for fraud prevention in online exams.

- Live chat support for technical rescue.
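As a rough illustration of how a secure browser's activity log could feed later review, the sketch below records session events and marks the ones that were blocked. The class names, action labels, and the `BLOCKED_ACTIONS` set are assumptions made for this example, based only on the blocked functions listed above.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Event:
    timestamp: datetime
    action: str    # hypothetical labels, e.g. "keystroke", "new_tab"
    blocked: bool


# Assumed mapping of the restricted functions named in the text.
BLOCKED_ACTIONS = {"new_tab", "screen_capture", "print"}


@dataclass
class SessionLog:
    session_id: str
    events: list = field(default_factory=list)

    def record(self, action, timestamp=None):
        """Append an event; entries are only ever added, never edited."""
        ts = timestamp or datetime.now(timezone.utc)
        event = Event(ts, action, action in BLOCKED_ACTIONS)
        self.events.append(event)
        return event

    def blocked_attempts(self):
        """Return the events a reviewer would likely inspect first."""
        return [e for e in self.events if e.blocked]


log = SessionLog("s1")
log.record("keystroke")
log.record("new_tab")
log.record("print")
```

A reviewer could then call `log.blocked_attempts()` to see only the restricted actions a candidate tried during the session.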

Key takeaway: Layered controls build a defensible audit trail. The coming section weighs the pros and cons.

Benefits And Drawbacks Compared

Top Reported Benefits Today

Remote exam proctoring deters casual misconduct. Furthermore, it supports anytime testing, which improves candidate convenience. Scalable AI tools also reduce staffing hours. Finally, recorded footage helps investigators resolve disputed results.

Major Reported Concerns Raised

However, privacy advocates warn about intrusive surveillance. Automated flagging can unfairly target candidates with darker skin tones. In addition, students without stable broadband struggle to comply, and technical outages may void completed attempts, adding stress.

Key takeaway: Benefits exist, yet ethical and legal duties remain heavy. Next, beginners learn practical setup steps.

Beginner Setup Checklist Steps

Follow these steps before any remote proctored exam:

- Read the test rules thoroughly. Print allowed resource lists.

- Run hardware checks 48 hours ahead. Use wired internet when possible.

- Position lighting so facial features remain clear, and remove reflective objects from the camera's view.

- Prepare official ID and practice the verification flow on your chosen Remote Exam Proctoring Software.

- Alert roommates about quiet periods. Additionally, plan restroom breaks under policy limits.

If a failure occurs, capture screenshots, contact vendor chat, and inform the instructor. This evidence speeds up any appeal.

Key takeaway: Preparation reduces stress and false flags. The final section guides institutional decision makers.

Institutional Action Guide Forward

Decision teams should apply a necessity test. Reserve surveillance for assessments where cheating risk outweighs privacy costs. Moreover, request bias audit reports from every shortlisted proctoring vendor.

Next, mandate data retention limits and deletion rights. Consequently, legal exposure drops. Provide alternative formats for students lacking hardware or those needing accommodations. Additionally, pilot small cohorts first, then track appeal rates.
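Two of these governance steps reduce to simple checks, sketched below: finding recordings that have passed a retention limit, and computing an appeal rate for a pilot cohort. The 30-day limit, the record IDs, and the pilot numbers are hypothetical values chosen only for illustration.

```python
from datetime import date, timedelta

# Hypothetical limit; the real value comes from institutional policy and law.
RETENTION_DAYS = 30


def expired_recordings(recordings, today, retention_days=RETENTION_DAYS):
    """Return IDs of recordings whose exam date is past the retention limit."""
    cutoff = today - timedelta(days=retention_days)
    return [rid for rid, exam_date in recordings.items() if exam_date < cutoff]


def appeal_rate(flagged_sessions, appeals):
    """Share of flagged sessions that candidates appealed."""
    if flagged_sessions == 0:
        return 0.0
    return appeals / flagged_sessions


# Made-up sample data for a small pilot.
recordings = {
    "rec-001": date(2024, 1, 5),
    "rec-002": date(2024, 2, 20),
}
to_delete = expired_recordings(recordings, today=date(2024, 3, 1))
pilot_rate = appeal_rate(flagged_sessions=40, appeals=6)
```

With this sample data, only `rec-001` falls outside the 30-day window, and the pilot appeal rate is 6 out of 40 flagged sessions. A rate that rises between cohorts may suggest that flagging rules need review.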

Key takeaway: Careful governance protects both integrity and student trust. We now conclude with strategic recommendations.

Conclusion

Effective remote exam proctoring balances deterrence, ethics, and accessibility. Institutions should blend secure browsers, identity checks, and human review while limiting data use. They must also revise assessments and invest in cheating prevention software that respects diverse users.

Why Proctor365? Proctor365 delivers AI-powered remote exam proctoring, advanced biometric verification, and elastic cloud scaling. Trusted by global exam bodies, our platform blocks leaks, supports hybrid oversight, and simplifies compliance. Discover how Proctor365 strengthens exam integrity today.

Frequently Asked Questions

- How does remote exam proctoring ensure exam integrity?

Remote exam proctoring uses live monitoring, AI flagging, and post-exam review to deter cheating, support accreditation, and maintain exam integrity by blending automated processes with human oversight.

- What are the core technologies behind efficient proctoring?

Core proctoring technologies include secure browsers, biometric identity verification, AI monitoring, and 360° room scans that work together to block unauthorized actions and protect against fraud.

- How does Proctor365 enhance online exam security?

Proctor365 employs advanced AI-powered monitoring, biometric verification, and elastic cloud scaling to detect cheating and ensure robust exam integrity by combining technology with human expertise.

- What should candidates do to prepare for remote proctored exams?

Candidates should review exam rules, perform hardware and identity verification checks, and set up a secure testing environment to minimize technical issues and reduce false flags.