Ensuring Fairness In A Remotely Proctored Exam Era

Remote learning became the norm overnight.

Consequently, exam security shifted to webcams and algorithms.

This shift created new possibilities and new anxieties.

A remotely proctored exam promises scale, but bias fears persist.

Students, especially those with darker skin, report false flags.

Meanwhile, vendors highlight rapid model improvements.

Universities, certification bodies, and corporate L&D teams need clarity.

They must balance integrity, privacy, and equity.

This article examines whether modern AI proctoring still discriminates.

Moreover, we explore how policy and technology can close these gaps.

Expect concise data, practical guidance, and next-step checklists.

Let us dive in.

Current Fairness Debate Insights

Researchers, regulators, and students agree bias exists in some proctoring modules.

NIST reported higher false positives for Asian and Black faces across many algorithms.

A 2022 study published in Frontiers echoed those findings in a university setting.

Researcher Deborah Raji warns that high-stakes use remains risky until performance is equal across groups.

Evidence shows the debate is grounded in measurable disparities.

Consequently, we must ask how fairness can be measured in each remotely proctored exam deployment.

Fairness In Remotely Proctored Exams

Fairness requires equal error rates across protected groups.

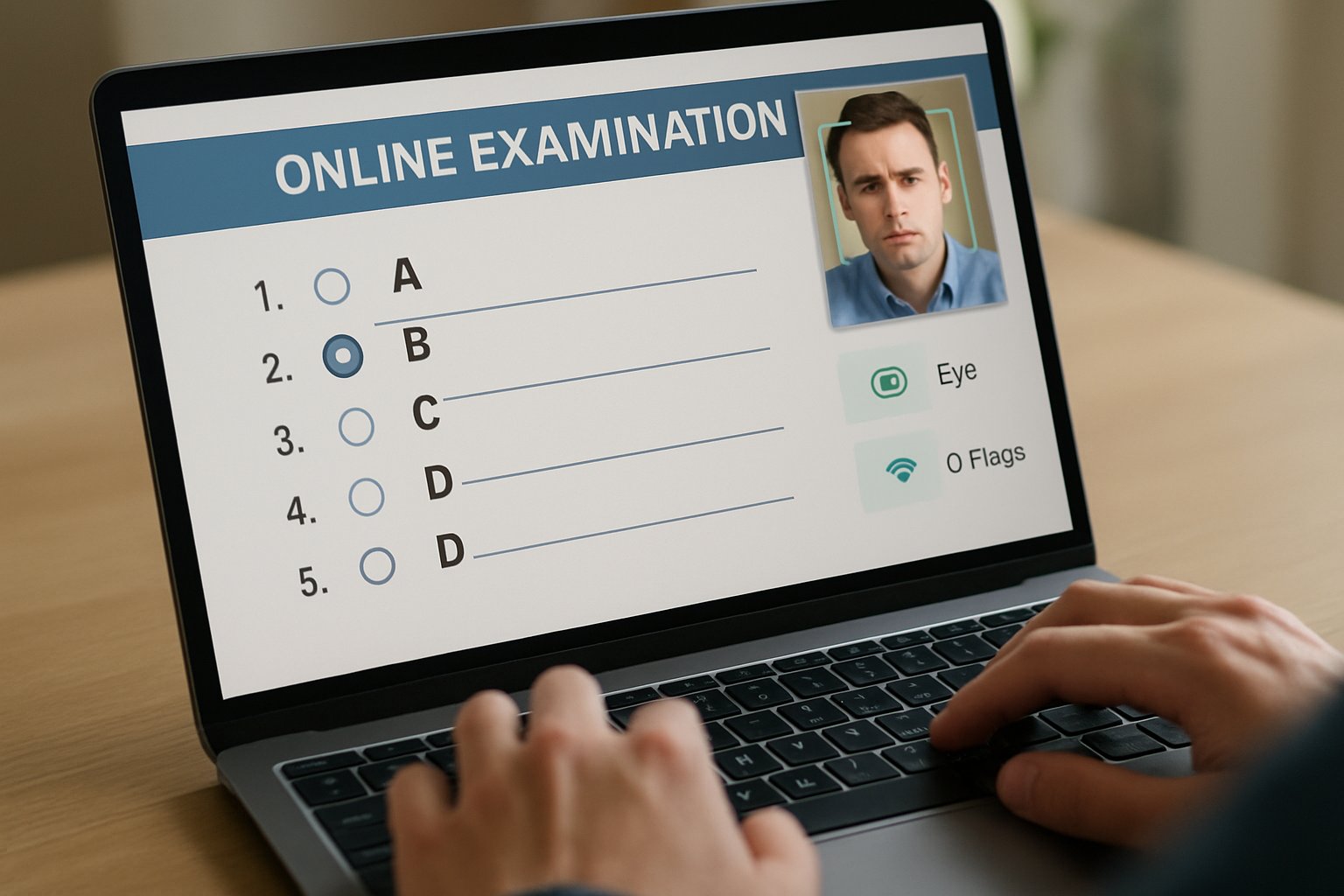

Face detection and recognition errors drive most complaints.

False positives harm honest students by triggering reviews.

Equalized-odds metrics, which compare error rates across groups, help teams measure improvements consistently.

- False Positive Rate: keep it comparable across skin tones.

- False Negative Rate: keep it low for every group so no one is blocked from the exam.

- Appeal Overturn Rate: shows how often human review reverses AI flags.

These metrics turn abstract fairness into trackable numbers.
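The metrics above can be computed per group from session logs. The Python below is a minimal sketch under stated assumptions, not any vendor's method: the `Session` record and its fields are hypothetical, ground truth (`cheated`) is assumed to come from completed investigations, and the false negative rate is read, as the list suggests, as genuine students wrongly rejected at face verification.

```python
import math
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Session:
    # Hypothetical record layout for illustration; real vendor exports differ.
    group: str                  # demographic group label, e.g. a skin-tone bucket
    cheated: bool               # misconduct confirmed after investigation
    flagged: bool               # the AI raised a misconduct flag
    id_rejected: bool = False   # genuine student wrongly failed face verification
    appealed: bool = False      # the student appealed a flag
    overturned: bool = False    # the appeal succeeded


def rate(num: int, den: int) -> float:
    """Ratio that returns NaN, not zero, when a group has no eligible sessions."""
    return num / den if den else math.nan


def group_metrics(sessions: list[Session]) -> dict[str, dict[str, float]]:
    """Per-group false positive, false negative, and appeal overturn rates."""
    counts = defaultdict(lambda: defaultdict(int))
    for s in sessions:
        c = counts[s.group]
        c["sessions"] += 1                 # assumes every session is a genuine student
        c["id_rejected"] += s.id_rejected
        if not s.cheated:                  # only honest sessions can be false positives
            c["honest"] += 1
            c["false_flags"] += s.flagged
        if s.appealed:
            c["appeals"] += 1
            c["overturned"] += s.overturned
    return {
        group: {
            "fpr": rate(c["false_flags"], c["honest"]),
            "fnr": rate(c["id_rejected"], c["sessions"]),
            "overturn_rate": rate(c["overturned"], c["appeals"]),
        }
        for group, c in counts.items()
    }


def max_gap(metrics: dict[str, dict[str, float]], key: str) -> float:
    """Largest difference in one metric between any two groups, skipping NaN."""
    values = [m[key] for m in metrics.values() if not math.isnan(m[key])]
    return max(values) - min(values) if values else math.nan
```

For example, `max_gap(group_metrics(sessions), "fpr")` returns the widest false-positive gap between any two groups, a single number a team can track from one release to the next. Groups with no honest sessions or no appeals yield NaN rather than a misleading zero.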

Next, we review how lawmakers push vendors toward such transparency.

Latest Regulatory Moves Worldwide

The EU AI Act classifies AI systems that monitor students during tests as high-risk.

Therefore, vendors must document testing, oversight, and human control.

From February 2025, the AI Act bans emotion recognition systems in EU workplaces and educational institutions, with narrow medical and safety exceptions.

Meanwhile, U.S. lawsuits, such as the one that ended in a $6.25M Respondus settlement, raise compliance costs.

These moves pressure every remotely proctored exam provider to tighten auditing pipelines.

Proposed North American legislation may soon require explicit consent before any AI-proctored exam.

Consequently, procurement teams face stricter due-diligence checklists.

Regulators demand proof, not promises.

Accordingly, evidence of bias reductions now matters commercially and legally.

Evidence Of Algorithmic Bias

Numbers speak louder than marketing pages.

NIST tested 189 algorithms and reported false positive rates that differed by up to 100-fold across demographic groups.

In the university study, dark-skinned women were flagged 3.5 times more often.
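A "3.5 times more often" figure reads most naturally as a ratio of flag rates between two groups. In the worked example below, $g_1$ and $g_0$ are placeholder group labels and the 7% and 2% rates are hypothetical, chosen only to show the arithmetic; they are not the study's data.

$$
R = \frac{P(\text{flagged} \mid g_1)}{P(\text{flagged} \mid g_0)}, \qquad \text{e.g. } R = \frac{0.07}{0.02} = 3.5
$$

Read the same way, NIST's "100-fold" gap is a ratio of false positive rates between demographic groups.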

Civil-rights groups cite student testimonials describing stressful false alarms during AI-proctored exams.

Several students describe being locked out of a remotely proctored exam when detection failed.

Such findings unsettle faculty and students alike.

Nevertheless, the best-performing models show far smaller demographic disparities.

Solid data confirms bias but also highlights progress potential.

Therefore, attention shifts to concrete mitigation tactics.

Mitigation Tactics Used Today

Vendors increasingly enforce human review before any disciplinary action.

Additionally, they retrain models on more diverse datasets to balance accuracy across skin tones.

Some institutions lower sensitivity thresholds to curb false positives.
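Lowering sensitivity usually means raising the score threshold at which a session gets flagged. The sketch below uses hypothetical groups, suspicion scores, and honesty labels (illustrative numbers only) to sweep a few thresholds and report each group's false positive rate, the widest gap between groups, and the share of dishonest sessions still caught.

```python
import math

# Hypothetical per-session suspicion scores in [0, 1] with ground-truth honesty
# labels, grouped by a demographic attribute. Illustrative numbers only.
GROUPS = {
    "A": {"scores": [0.20, 0.55, 0.70, 0.90], "honest": [True, True, True, False]},
    "B": {"scores": [0.10, 0.30, 0.60, 0.95], "honest": [True, True, True, False]},
}


def flag_rate(scores, mask, threshold):
    """Share of the selected sessions whose score is at or above the threshold."""
    chosen = [s for s, keep in zip(scores, mask) if keep]
    if not chosen:
        return math.nan
    return sum(s >= threshold for s in chosen) / len(chosen)


def sweep(groups, thresholds):
    """For each threshold: per-group FPR, per-group catch rate, widest FPR gap.

    Assumes every group has both honest and dishonest sessions.
    """
    rows = []
    for t in thresholds:
        fpr = {g: flag_rate(d["scores"], d["honest"], t) for g, d in groups.items()}
        caught = {
            g: flag_rate(d["scores"], [not h for h in d["honest"]], t)
            for g, d in groups.items()
        }
        rows.append({
            "threshold": t,
            "fpr": fpr,
            "caught": caught,
            "fpr_gap": max(fpr.values()) - min(fpr.values()),
        })
    return rows


if __name__ == "__main__":
    for row in sweep(GROUPS, [0.5, 0.65, 0.8]):
        print(row)
```

Raising the threshold can only keep or lower the catch rate, so a real sweep should look for a threshold where the false-positive gap is acceptable without giving up too much detection. In this toy data every threshold still catches both dishonest sessions; real data rarely behaves that neatly.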

Others redesign assessments, using open-book formats that reduce cheating incentives.

Moreover, data minimization policies now limit biometric retention windows.
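A retention window can be enforced by a scheduled job that selects expired biometric records for deletion. This is a minimal sketch: the 30-day window, the `BiometricRecord` fields, and the hold for open appeals are hypothetical assumptions, and the actual deletion is left to whatever storage layer an institution uses.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical window; the real value should come from policy and applicable law.
RETENTION = timedelta(days=30)


@dataclass
class BiometricRecord:
    session_id: str
    captured_at: datetime        # timezone-aware UTC capture time
    under_appeal: bool = False   # hypothetical hold while a dispute is open


def select_expired(records, now=None, retention=RETENTION):
    """Split records into (kept, expired); expired ones are due for deletion.

    Records tied to an open appeal are kept even past the window so reviewers
    still have the evidence. Deleting the expired records is left to storage.
    """
    if now is None:
        now = datetime.now(timezone.utc)
    cutoff = now - retention
    kept, expired = [], []
    for record in records:
        if record.captured_at < cutoff and not record.under_appeal:
            expired.append(record)
        else:
            kept.append(record)
    return kept, expired
```

Passing `now` explicitly makes the job easy to test and to re-run for a specific date.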

Together, these steps cut error rates and improve how students perceive the process.

Yet every remotely proctored exam still relies on diligent configuration.

Practical Procurement Checklist Steps

Decision makers need quick, concrete checks.

- Request independent fairness audit results for the AI proctoring solution.

- Confirm that human reviewers examine every AI flag before any accusation is made.

- Evaluate accommodation policies for disability and bandwidth constraints.

- Inspect data retention schedules and encryption controls.

- Review historical flag overturn rates across each demographic group.

Following this checklist narrows risk quickly.

Subsequently, teams can negotiate evidence-based service-level agreements.

Beyond Surveillance: Assessment Design

Fairness also improves when assessments change.

Open-book exams, projects, and oral defenses reduce surveillance needs.

Consequently, student anxiety drops, and integrity remains intact.

Institutions combine such design changes with lighter AI proctoring.

Institutions taking this approach report fewer privacy complaints and equal performance outcomes.

Assessment redesign offers a complementary strategy to technical fixes.

Now, let us recap and introduce a trusted partner.

Bias remains a real, but manageable, threat.

Institutions should measure, audit, and redesign before each remotely proctored exam launch.

They must also demand transparent metrics from every vendor.

Why Proctor365?

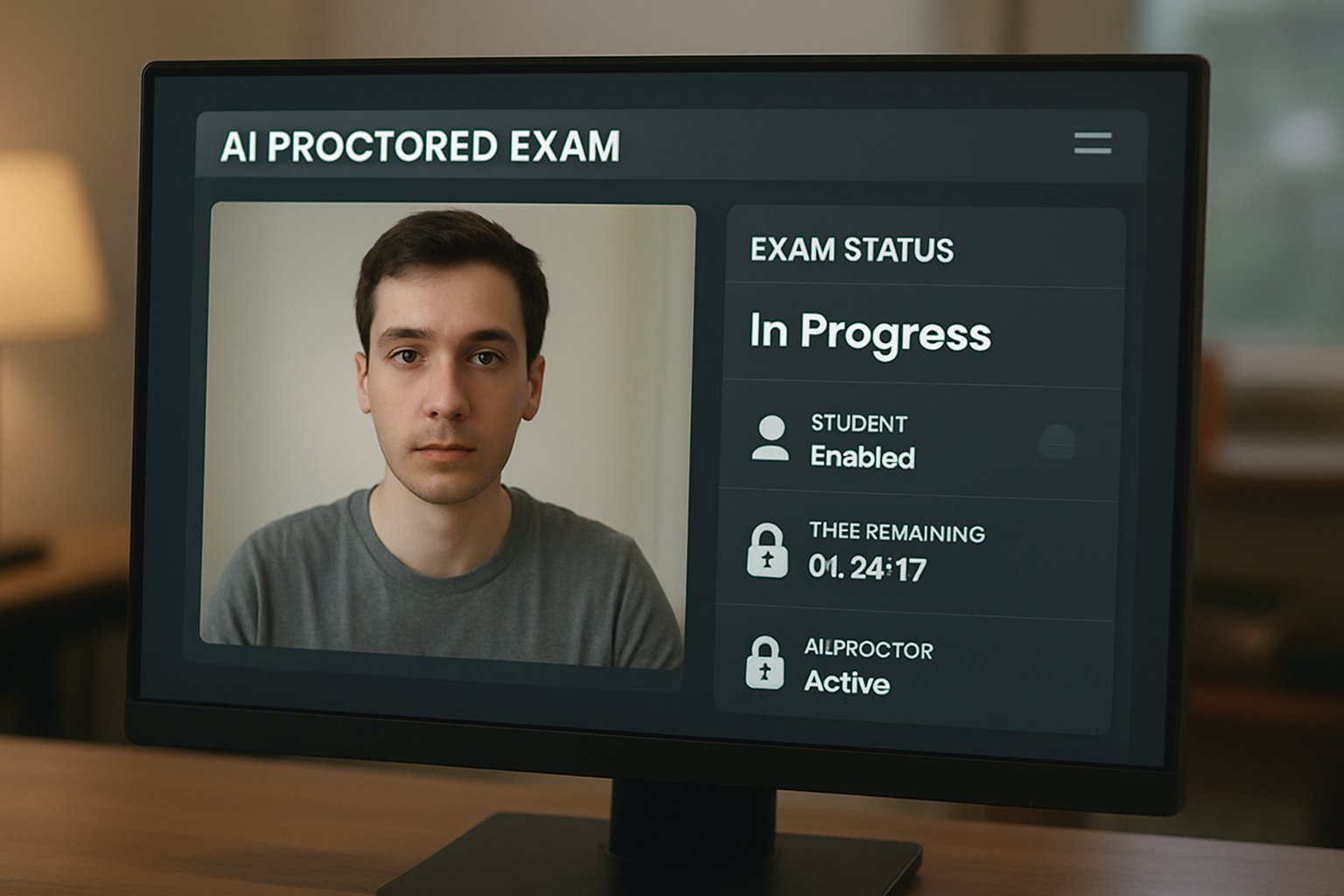

Our platform delivers AI-powered proctoring, advanced identity verification, and scalable monitoring trusted by global exam bodies.

Moreover, human reviewers verify every AI flag to protect fairness.

Consequently, your next remotely proctored exam can achieve integrity without compromising equity.

Explore the full solution at Proctor365.ai.

Frequently Asked Questions

- How does AI proctoring help maintain exam integrity?

AI proctoring uses advanced algorithms and real-time human review to quickly detect and deter cheating while ensuring data-driven integrity and fairness across all assessments.

- What measures reduce bias in proctoring systems?

Vendors retrain algorithms on diverse datasets, enforce mandatory human review, and track equalized-odds metrics to reduce bias and ensure fairness in exam security.

- What key steps should be followed when procuring a proctoring solution?

Procurement teams should request independent fairness audits, verify human review processes, and assess data retention and encryption protocols to ensure compliance and secure exam integrity.

- How does Proctor365 ensure fair AI proctoring?

Proctor365 combines robust AI proctoring, advanced identity verification, and fraud prevention with detailed human oversight to ensure balanced error rates and transparent, fair exam monitoring.