Beyond 2026: The AI-Proctored Exam’s Next Chapter

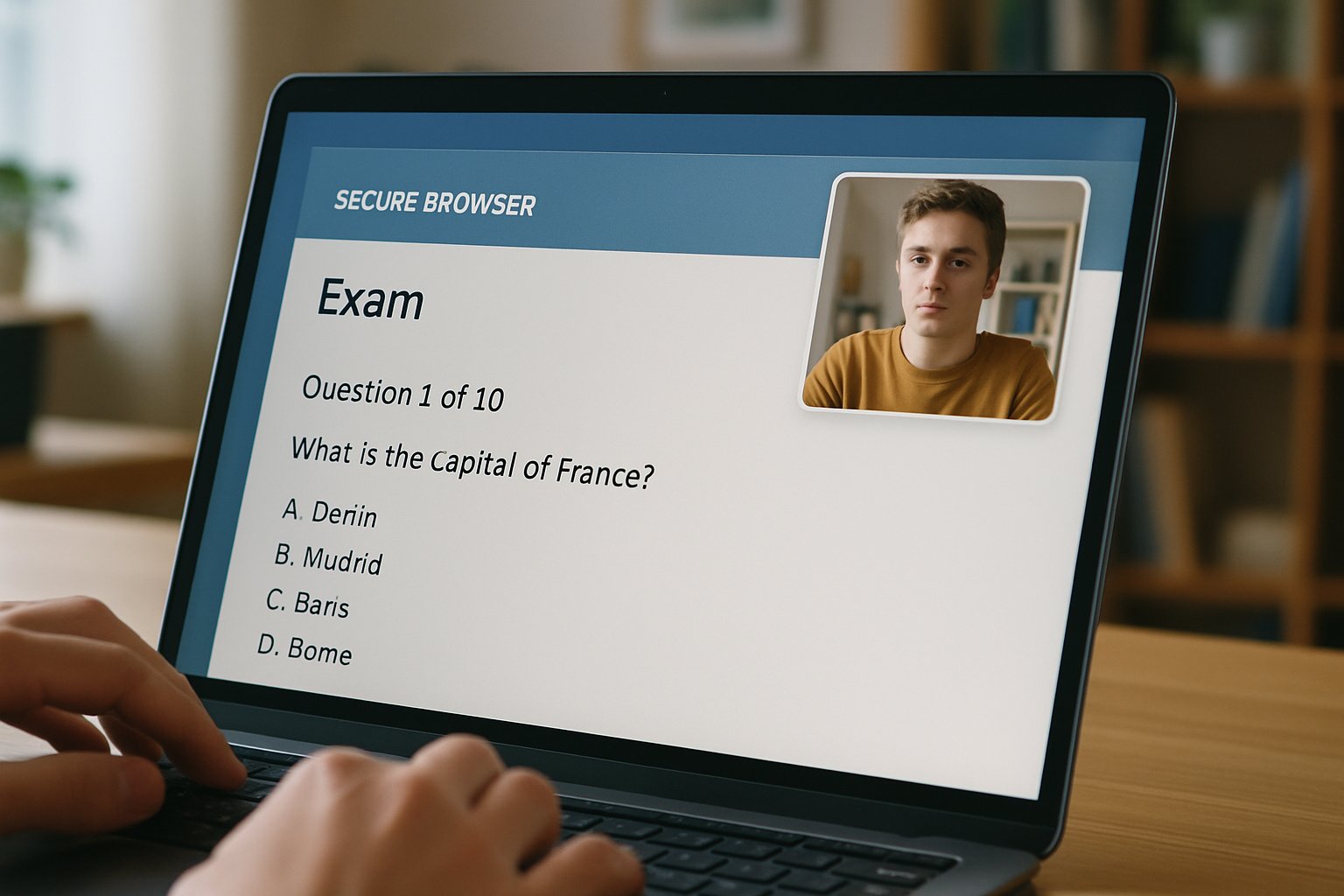

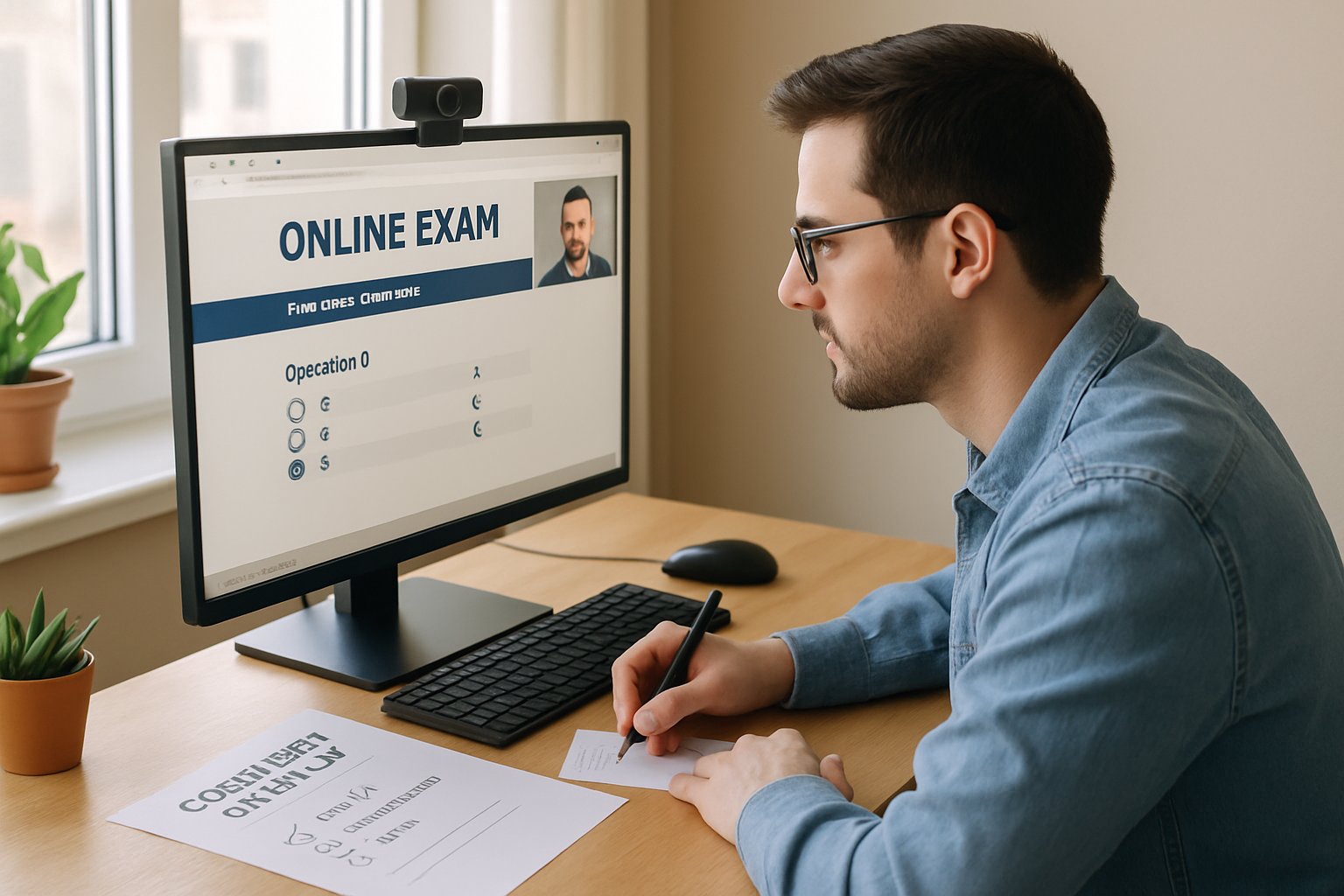

Students no longer sit quietly in crowded halls. Instead, screens, cameras, and algorithms watch every click. However, trust in that surveillance is under strain. The AI-proctored exam now sits at the center of a three-way contest: regulators press for transparency, educators redesign assessment, and vendors race to improve detection. Moreover, the EU AI Act’s high-risk deadline of 2 August 2026 looms, forcing institutions to rethink strategies quickly. Meanwhile, U.S. state rules add patchwork complexity. Consequently, universities, ed-tech platforms, and corporate L&D teams need a clear roadmap. This article traces developments, market data, technical shifts, and practical actions that will shape remote assessment beyond 2026.

AI-Proctored Exam Landscape

Global adoption keeps climbing, yet figures vary. IndustryResearch estimates the 2026 market at USD 825 million, while other reports cite USD 1.06 billion. Furthermore, surveys claim that 60 to 78 percent of higher-ed institutions now use some form of remote proctoring. Despite growth, buyers question reliability and fairness.

Leading vendors—Proctorio, Examity, Honorlock, Respondus, and others—cover most segments. Additionally, emerging specialists push privacy-preserving architectures and behavioral biometrics. Consequently, competition has intensified ahead of the EU deadline. This competitive heat frames every strategic decision.

In short, demand persists, but skepticism rises alongside it. Next, we examine looming compliance pressures.

Compliance Clock Is Ticking

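2 August 2026 is circled on every roadmap. The EU AI Act classifies AI systems that monitor test-takers for prohibited behavior as high-risk, triggering strict risk management, human oversight, and audit duties. Moreover, non-compliance with these high-risk obligations can draw fines of up to EUR 15 million or 3 percent of global annual turnover, whichever is higher; the Act's 7 percent ceiling is reserved for prohibited practices. Every AI-proctored exam offered to EU learners therefore falls within the high-risk regime.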

Across the Atlantic, state bills in California, Illinois, and New York require algorithmic transparency and biometric consent. Consequently, multinational universities must navigate parallel regimes. Many already request vendor model cards and security attestations.

Regulators will not blink, so institutions must act. Market data, however, offer both caution and opportunity.

Market Numbers Still Diverge

Forecasts differ because analysts track platforms, services, and test-center hybrids differently. Nevertheless, consensus expects double-digit compound growth through 2030. Additionally, behavioral biometric sub-markets show some of the fastest gains, with research citing 95 percent fraud detection in controlled keystroke studies.

Investors still view the AI-proctored exam segment as resilient despite lawsuits, yet they watch litigation risk closely. EPIC and ACLU complaints highlight opaque algorithms and mass data collection. Therefore, boardrooms now weigh revenue potential against regulatory exposure.

Capital remains available, but only for resilient models. Technical innovation becomes the next decisive lens.

Tech Arms Race Intensifies

Vendors stack multiple signals—face, voice, keystroke, and secure-browser telemetry—to cut cheating avenues. However, adversaries answer with deepfake video, synthetic voices, and presentation attacks. Subsequently, researchers publish liveness detection and frequency-domain spoof defenses.

Meanwhile, privacy-first designs emerge. Client-side feature extraction keeps raw biometrics on the test device, so only derived features or scores leave it, which reduces data transfer. Consequently, EU buyers increasingly request such architectures. Each AI-proctored exam session now generates multimodal risk scores.

- Anti-spoofing models detect 90 percent of known deepfakes in lab tests.

- Secure browsers block 25,000+ prohibited app launches during one large remotely proctored exam series.

- On-device processing cuts cloud video storage by 70 percent, easing GDPR risk.
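To make the idea of a multimodal risk score concrete, here is a minimal Python sketch that blends per-signal anomaly scores into a single number. The signal names, the weights, and the 0.6 review threshold are all assumptions chosen for illustration; they are not taken from any vendor's product, and a real system would calibrate them against labelled sessions.

```python
# Hypothetical weights for illustration only; a real system would calibrate
# them against labelled exam sessions.
DEFAULT_WEIGHTS = {"face": 0.4, "voice": 0.2, "keystroke": 0.2, "browser": 0.2}


def combined_risk(signals, weights=DEFAULT_WEIGHTS):
    """Return a weighted average of per-signal anomaly scores in [0, 1].

    Signals that are absent from the session (for example, no microphone)
    are skipped, and the remaining weights are renormalised.
    """
    known = {name: score for name, score in signals.items() if name in weights}
    if not known:
        raise ValueError("no recognised signals supplied")
    for name, score in known.items():
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"{name} score must be between 0 and 1")
    total_weight = sum(weights[name] for name in known)
    return sum(weights[name] * score for name, score in known.items()) / total_weight


def needs_human_review(score, threshold=0.6):
    """Route a session to a human reviewer when the score crosses the threshold."""
    return score >= threshold


if __name__ == "__main__":
    session = {"face": 0.9, "voice": 0.1, "keystroke": 0.5, "browser": 0.2}
    score = combined_risk(session)
    print(f"risk={score:.2f} review={needs_human_review(score)}")
```

In a privacy-first design, a function like `combined_risk` could run on the test device so that only the score, not the raw video or audio, is sent onward.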

The arms race will continue, but design choices can tame risk. Privacy questions now dominate the conversation.

Privacy Equity Debate Deepens

Several peer-reviewed studies document higher false-positive rates for darker skin tones and disabled students. Moreover, room-scan requirements expose private living spaces, raising dignity concerns. Consequently, student unions demand alternatives or stronger appeal processes.

In contrast, vendors argue that hybrid review models reduce harm because humans verify AI flags. Independent benchmarks, however, remain scarce. Therefore, transparency dashboards and third-party audits are gaining traction.
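A third-party audit of the kind described above often starts with a simple question: among students who did not cheat, how often was each group flagged? The Python sketch below computes that false-positive rate per group. The record layout and the group labels are assumptions for illustration, and the sample data is synthetic, not drawn from any study.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Compute the false-positive rate per group.

    `records` is an iterable of (group, flagged, confirmed_cheating) tuples.
    For each group, the rate is flagged-but-innocent sessions divided by all
    innocent sessions. Groups with no innocent sessions are omitted.
    """
    innocent = defaultdict(int)
    flagged_innocent = defaultdict(int)
    for group, flagged, confirmed_cheating in records:
        if confirmed_cheating:
            continue  # true positives and false negatives do not affect FPR
        innocent[group] += 1
        if flagged:
            flagged_innocent[group] += 1
    return {group: flagged_innocent[group] / count for group, count in innocent.items()}


def largest_gap(rates):
    """Difference between the highest and lowest group false-positive rate."""
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    # Synthetic example: 10 innocent sessions per group.
    sample = (
        [("group_a", True, False)] * 1 + [("group_a", False, False)] * 9
        + [("group_b", True, False)] * 3 + [("group_b", False, False)] * 7
    )
    rates = false_positive_rates(sample)
    print(rates, largest_gap(rates))
```

Publishing a figure like `largest_gap` on a transparency dashboard gives students and regulators one number to track over time, though it does not replace a full fairness review.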

When a remotely proctored exam flags an innocent candidate, resolution speed matters. Institutions now track appeal times as a key service-level metric.
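Tracking appeal times as a service-level metric can be as simple as the Python sketch below, which reports the median resolution time and the share of appeals closed within a target. The 72-hour target and the data layout are assumptions for illustration, not an industry standard.

```python
from datetime import datetime
from statistics import median


def appeal_sla_report(appeals, target_hours=72.0):
    """Summarise appeal resolution times.

    `appeals` is a list of (opened, resolved) datetime pairs.
    `target_hours` is a hypothetical service-level target.
    """
    if not appeals:
        raise ValueError("no resolved appeals to report on")
    durations = [
        (resolved - opened).total_seconds() / 3600 for opened, resolved in appeals
    ]
    within_target = sum(1 for hours in durations if hours <= target_hours)
    return {
        "median_hours": median(durations),
        "share_within_target": within_target / len(durations),
    }


if __name__ == "__main__":
    resolved_appeals = [
        (datetime(2026, 3, 1, 9), datetime(2026, 3, 2, 9)),
        (datetime(2026, 3, 1, 9), datetime(2026, 3, 3, 9)),
        (datetime(2026, 3, 1, 9), datetime(2026, 3, 5, 9)),
    ]
    print(appeal_sla_report(resolved_appeals))
```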

Equity gaps threaten legitimacy if ignored. Future options extend beyond surveillance alone.

Future Scenario Paths Emerge

Analysts outline three plausible futures. First, a regulated high-road where privacy-first, auditable platforms dominate. Second, an escalating arms race that demands ever more intrusive sensors. Third, a pedagogical pivot toward authentic projects, oral defenses, and selective center-based testing.

Each pathway requires different investments. Furthermore, credential value depends on matching risk level to assessment design. Therefore, strategic foresight now beats reactive spending.

Whatever path wins, leaders need concrete tasks today. The next section lists immediate priorities.

Strategic Action Items Now

Universities and certification bodies can start with a focused checklist.

- Map every AI-proctored exam currently scheduled against jurisdictional rules.

- Demand documented model cards, bias tests, and recent security audits from vendors.

- Pilot authentic alternatives alongside every remotely proctored exam.

- Update student policies to include clear appeal timelines and data retention limits.

- Budget for on-device processing upgrades before the EU deadline.
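The first checklist item, mapping exams against jurisdictional rules, lends itself to a small lookup table. The Python sketch below is one possible shape for it. The region codes, the `Exam` structure, and the requirement labels are hypothetical; the labels only paraphrase the duties named earlier in this article and should be replaced with the wording your counsel approves.

```python
from dataclasses import dataclass, field

# Requirement labels paraphrase this article and are illustrative only.
RULES = {
    "EU": {"risk management", "human oversight", "audit trail"},
    "US-CA": {"algorithmic transparency", "biometric consent"},
    "US-IL": {"algorithmic transparency", "biometric consent"},
    "US-NY": {"algorithmic transparency", "biometric consent"},
}


@dataclass
class Exam:
    name: str
    learner_regions: set = field(default_factory=set)
    controls_in_place: set = field(default_factory=set)


def compliance_gaps(exam, rules=RULES):
    """Return, per region, the requirements the exam does not yet cover.

    Regions without an entry in `rules` are treated as having no listed
    requirements, which is an assumption worth reviewing case by case.
    """
    gaps = {}
    for region in sorted(exam.learner_regions):
        missing = rules.get(region, set()) - exam.controls_in_place
        if missing:
            gaps[region] = missing
    return gaps


if __name__ == "__main__":
    finals = Exam(
        name="Spring finals",
        learner_regions={"EU", "US-IL"},
        controls_in_place={"human oversight", "biometric consent"},
    )
    for region, missing in compliance_gaps(finals).items():
        print(region, sorted(missing))
```

Running a check like this across the full exam calendar turns the checklist into a list of concrete gaps per exam and region.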

Additionally, corporate L&D teams should align with internal privacy officers and security leads. Consequently, cross-functional governance reduces future surprises.

Acting early saves cost and prevents rushed deployments. We close with final insights and a proven partner.

Conclusion

Remote assessment will not disappear. Instead, the AI-proctored exam faces sharper scrutiny and demands smarter design. Leaders who balance compliance, privacy, and pedagogy will defend credential value.

Why Proctor365? Our platform delivers the AI-proctored exam experience institutions can trust. We pair advanced identity verification, AI-powered monitoring, and real-time analytics with human oversight. Moreover, our cloud scales effortlessly from small cohorts to global certification campaigns. Top exam bodies worldwide already rely on Proctor365 to safeguard integrity. Visit Proctor365.ai and schedule a demo today.

Frequently Asked Questions

- What are the key challenges facing AI proctored exams?

Key challenges include compliance with regulations like the EU AI Act, addressing privacy concerns, and preventing fraud, with evolving tactics such as deepfakes. Proctor365 addresses these with advanced AI monitoring and identity verification.

- How does Proctor365 ensure exam integrity and compliance?

Proctor365 combines AI-powered monitoring with human oversight, on-device processing, and secure biometric verification. This ensures robust fraud prevention and meets strict regulatory requirements for exam integrity.

- How are educational institutions preparing for regulatory changes in remote assessments?

Institutions are mapping exams against jurisdictional rules, demanding transparency in vendor practices, and upgrading systems. Proctor365 supports these efforts with clear model cards, audit processes, and compliance-friendly features.

- What role do advanced technologies play in modern remote proctoring?

Advanced technologies like biometrics, behavioral analytics, and secure-browser telemetry improve fraud detection and privacy. Proctor365 leverages these tools to deliver reliable, scalable, and privacy-first proctoring solutions.