Cloud Proctoring Software for Online Exams: Key Advantages

Remote assessment exploded during the pandemic, and demand never slowed. Universities, certification bodies, and corporations now run high-stakes tests around the clock. Consequently, they need tools that guard integrity without crushing budgets or IT teams. Cloud-based proctoring software for online exams answers that challenge. It combines elastic cloud resources with machine-learning video analytics and human review workflows. Moreover, recent market reports forecast the sector will grow beyond USD 8 billion by 2035. That forecast illustrates how critical secure, scalable assessment has become for digital learning. However, decision makers still weigh privacy, bias, and infrastructure questions before signing contracts. This article unpacks the concrete advantages, backed by data from 2024-2026 industry research. Readers will also learn practical steps to deploy an AI-proctored exam strategy responsibly.

Cloud Scale Explained

Cloud capacity scales in seconds, not semesters. Auto-scaling pools on AWS or Azure spin up GPUs whenever candidate volume surges. Therefore, institutions avoid the exam-day meltdowns that plagued early on-prem systems. In 2025, Honorlock demonstrated 40,000 concurrent sessions during California community college finals. Similarly, Proctorio’s multi-cloud architecture rerouted traffic during a regional outage, maintaining 99.9% uptime. These wins show why many teams choose cloud proctoring software for online exam delivery. By comparison, local servers often cap at a few hundred candidates and require large capital outlays.
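To make the scaling idea concrete, here is a minimal sketch of the arithmetic an auto-scaling rule might apply. It is an illustration under stated assumptions, not any vendor's actual policy: the function name `workers_needed`, the figure of 50 sessions per worker, and the 20% headroom are all hypothetical.

```python
def workers_needed(concurrent_sessions: int,
                   sessions_per_worker: int,
                   headroom_pct: int = 20) -> int:
    """Return how many analysis workers to run for the current load.

    sessions_per_worker and headroom_pct are hypothetical tuning values,
    not figures published by any proctoring vendor.
    """
    if concurrent_sessions < 0:
        raise ValueError("concurrent_sessions must not be negative")
    if sessions_per_worker <= 0:
        raise ValueError("sessions_per_worker must be positive")
    # Integer arithmetic avoids floating-point rounding surprises.
    padded_load = concurrent_sessions * (100 + headroom_pct)
    capacity = 100 * sessions_per_worker
    # Ceiling division, with at least one worker kept warm.
    return max(1, -(-padded_load // capacity))


# 40,000 concurrent sessions, assuming 50 sessions per worker.
print(workers_needed(40_000, 50))  # 960
# A few hundred candidates, the scale of a typical local server.
print(workers_needed(300, 50))     # 8
```

The point of the sketch is that capacity is recomputed from live demand, so the same rule covers both a small quiz and a finals-week surge.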

Cloud elasticity preserves candidate experience and institutional reputation. As we explore cost next, scale directly links to budget savings.

Let’s examine those savings now.

Cost Efficiency Wins

Budgets face pressure from expanding learner populations. Cloud economics ease that pressure by converting hefty capital expenses into predictable operating fees. Market Research Future notes average per-exam pricing dropped 25% after institutions switched to cloud AI. Pay-as-you-go pricing also lets smaller departments pilot without multi-year commitments.

- On-prem server setup: ≈ USD 150,000 upfront (2024 campus IT survey).

- Cloud AI seat license: USD 3-7 per exam with hybrid review.

- Maintenance savings: 40% reduction in IT overtime hours.

Consequently, many CFOs rank investments in proctoring software for online exams among their fastest ROI projects. Additionally, AI proctoring automation trims human invigilation shifts, freeing staff for instructional tasks.
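The figures in the list above allow a rough break-even estimate. The sketch below is a simplification: it compares only the USD 150,000 upfront cost with the USD 3-7 per-exam fee and ignores on-prem running costs such as power, staffing, and hardware refresh, so the real break-even volume would likely be higher. The function name `break_even_exams` is illustrative.

```python
def break_even_exams(upfront_usd: float, per_exam_usd: float) -> float:
    """Exam count at which cumulative cloud fees equal the upfront outlay."""
    if per_exam_usd <= 0:
        raise ValueError("per_exam_usd must be positive")
    return upfront_usd / per_exam_usd


ONPREM_UPFRONT_USD = 150_000   # from the 2024 campus IT survey figure
CLOUD_PER_EXAM_USD = (3, 7)    # low and high end of the quoted range

low_price, high_price = CLOUD_PER_EXAM_USD
print(break_even_exams(ONPREM_UPFRONT_USD, low_price))          # 50000.0
print(round(break_even_exams(ONPREM_UPFRONT_USD, high_price)))  # 21429
```

Under these assumptions, a program running fewer than roughly 21,000 to 50,000 exams over the hardware's lifetime spends less on per-exam cloud fees than on the server purchase alone.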

Cloud billing aligns cost with actual usage. That alignment fuels rapid adoption across sectors.

Yet savings matter only if features update quickly.

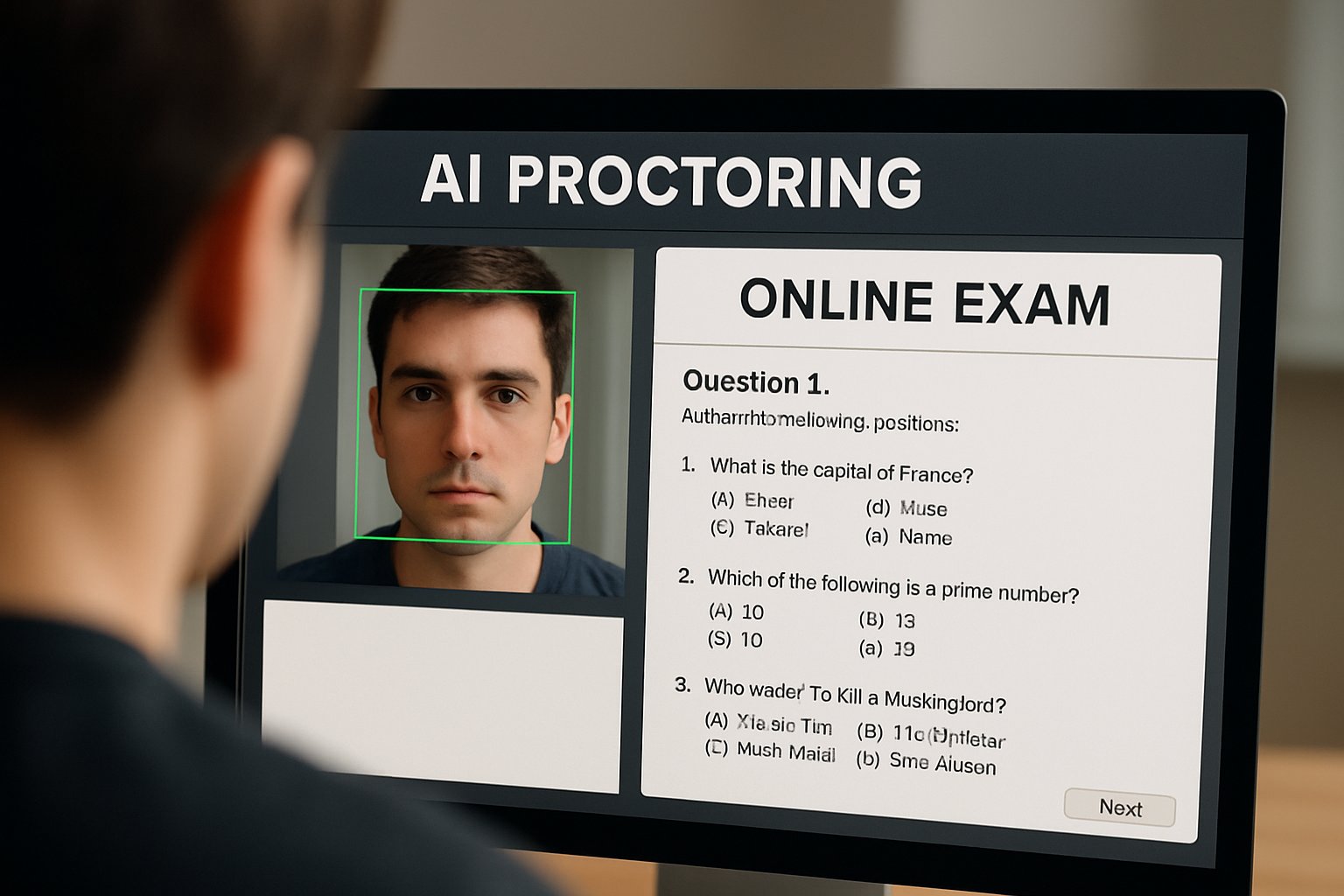

Rapid Updates for Online Exam Proctoring

Cheating tactics evolve with generative AI and voice clones. Therefore, vendors push weekly model updates across global clusters. Proctorio’s 2025 release notes highlighted new browser telemetry detectors rolled out overnight. Because updates happen centrally, campuses receive protection instantly without patching laptops. Similarly, Examity added voice-print analytics and pushed them live before spring exams began. Such speed keeps online exam proctoring deployments ahead of emerging threats. Importantly, change logs stay transparent, allowing IT teams to audit revisions.

Continuous delivery shrinks the window of exposure that cheaters can exploit. Instant updates support institutional credibility.

Next, seamless LMS connections simplify day-to-day workflows.

Seamless LMS Integration

Integration friction can derail even strong security tools. However, modern vendors ship IMS LTI links that drop into Canvas, Moodle, or Blackboard. Single sign-on passes student identity from the LMS into the AI proctoring dashboard. As a result, faculty schedule sessions inside familiar gradebook screens. Likewise, consolidated dashboards display flags alongside quiz results for quick review. Plugins for online exam proctoring software also export analytics to institutional data lakes.
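As an illustration of how identity can travel from the LMS to a proctoring tool, the sketch below reads a few standard claims from an LTI 1.3 launch. It assumes LTI 1.3 specifically (the article does not name a version) and assumes the launch token's signature has already been verified upstream. The function name `candidate_from_launch` and the returned dictionary shape are hypothetical.

```python
LTI_CLAIM = "https://purl.imsglobal.org/spec/lti/claim/"
LEARNER_ROLE = "http://purl.imsglobal.org/vocab/lis/v2/membership#Learner"


def candidate_from_launch(claims: dict) -> dict:
    """Extract the fields a proctoring session needs from launch claims.

    `claims` is the already-verified, decoded launch payload; this
    sketch performs no signature or nonce validation itself.
    """
    if claims.get(LTI_CLAIM + "message_type") != "LtiResourceLinkRequest":
        raise ValueError("not a resource link launch")
    roles = claims.get(LTI_CLAIM + "roles", [])
    context = claims.get(LTI_CLAIM + "context", {})
    return {
        "user_id": claims["sub"],          # LMS-issued user identifier
        "course_id": context.get("id"),    # course the exam belongs to
        "is_learner": LEARNER_ROLE in roles,
    }


# Example payload with made-up identifiers.
launch = {
    "sub": "user-123",
    LTI_CLAIM + "message_type": "LtiResourceLinkRequest",
    LTI_CLAIM + "roles": [LEARNER_ROLE],
    LTI_CLAIM + "context": {"id": "course-42"},
}
print(candidate_from_launch(launch))
```

Because the LMS asserts the identity, the candidate never types separate credentials into the proctoring tool.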

Smooth integration reduces training time and support tickets. Faculty adoption grows when tools feel invisible.

Global programs, however, need more than convenience; they demand compliance.

Global Compliance Reach

Data residency laws vary across regions. Consequently, multi-region clouds let providers store EU learner data in Frankfurt or Paris. PSI and Talview added Indian data centers after 2024 privacy amendments. Moreover, encryption at rest and SOC 2 reports reassure procurement committees. These guarantees help contracts for online exam proctoring software clear legal review faster. Still, privacy alone does not stop sophisticated fraud schemes.
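A residency rule of this kind can be sketched as a simple lookup. The example below is illustrative only: it uses AWS region identifiers for Frankfurt (`eu-central-1`), Paris (`eu-west-3`), and Mumbai (`ap-south-1`) as stand-ins, and the choice of Mumbai for India is an assumption, since the vendors' actual locations are not stated here. The names `RESIDENCY_REGIONS` and `storage_region` are hypothetical.

```python
from typing import Optional

# Jurisdiction -> cloud regions where learner data may be stored.
# The mapping is illustrative, not a statement of any vendor's setup.
RESIDENCY_REGIONS = {
    "EU": ["eu-central-1", "eu-west-3"],  # Frankfurt, Paris
    "IN": ["ap-south-1"],                 # Mumbai (assumed)
}


def storage_region(jurisdiction: str, preferred: Optional[str] = None) -> str:
    """Pick a storage region that satisfies the learner's jurisdiction."""
    allowed = RESIDENCY_REGIONS.get(jurisdiction)
    if allowed is None:
        # Fail closed: never fall back to an arbitrary region.
        raise LookupError(f"no residency rule for {jurisdiction!r}")
    if preferred in allowed:
        return preferred
    return allowed[0]


print(storage_region("EU"))                  # eu-central-1
print(storage_region("EU", "eu-west-3"))     # eu-west-3
print(storage_region("IN", "eu-west-3"))     # ap-south-1
```

Raising an error for an unmapped jurisdiction is a deliberate choice in this sketch: a missing rule should block storage rather than silently place data in the wrong region.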

Regional hosting answers legal questions early. Secure pipelines build stakeholder trust.

Robust analytics close the final gap.

Analytics Strengthen Security

Cloud aggregation anonymously pools millions of exam events. Therefore, pattern-mining models spot new collusion behaviors within hours. Vendors feed insights back into AI proctoring risk scores. Dashboards show heat maps of session anomalies across entire programs. Administrators act quickly, armed with evidence ranked by severity. In turn, users of online exam proctoring software report lower false-positive rates after tuning.
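Ranking evidence by severity can be pictured as a filtered, sorted review queue. The sketch below is a toy model under stated assumptions: the `Flag` fields, the 0.0 to 1.0 risk scale, and the 0.5 threshold are hypothetical, and real risk scores come from vendor models that are not described here.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Flag:
    session_id: str
    kind: str          # e.g. "second_face", "tab_switch" (made-up labels)
    risk_score: float  # hypothetical scale from 0.0 (benign) to 1.0


def review_queue(flags: Iterable[Flag], threshold: float = 0.5) -> List[Flag]:
    """Return flags at or above the threshold, highest risk first."""
    kept = [f for f in flags if f.risk_score >= threshold]
    return sorted(kept, key=lambda f: f.risk_score, reverse=True)


flags = [
    Flag("s1", "tab_switch", 0.35),
    Flag("s2", "second_face", 0.92),
    Flag("s3", "audio_voice", 0.61),
]
for flag in review_queue(flags):
    print(flag.session_id, flag.kind, flag.risk_score)
# s2 second_face 0.92
# s3 audio_voice 0.61
```

Raising or lowering the threshold is the tuning step: a higher value sends fewer, stronger flags to reviewers, which is one way false positives can fall.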

Mitigating New Exam Concerns

Despite these benefits, privacy and bias still require vigilance. Academic studies reveal higher flag rates for students with darker skin tones. Consequently, institutions now demand hybrid human review and independent audits. Furthermore, many campuses provide alternative assessment paths for students without webcams.

Data analytics must pair with ethical safeguards. Balanced programs protect integrity and student dignity.

We can now wrap up the key lessons.

Cloud delivery, rapid updates, smooth integration, global compliance, and deep analytics make these systems compelling. However, institutions must balance efficiency with transparency, human oversight, and accessibility. When implemented responsibly, proctoring software for online exams can raise trust across digital classrooms.

Why Proctor365? Proctor365 combines adaptive AI, advanced identity verification, and elastic monitoring to secure assessments worldwide. Moreover, our platform scales effortlessly from small cohorts to nationwide certification drives. Global exam bodies already rely on Proctor365 for reliable, unbiased oversight. Visit https://www.proctor365.ai/ today and discover how we elevate exam integrity for your organization.

Frequently Asked Questions

- How does cloud-based proctoring enhance exam security?

Cloud-based proctoring leverages elastic resources and machine-learning video analytics combined with human review. This scalable, cost-effective approach helps preserve exam integrity and protect against cheating during remote assessments.

- How does Proctor365 improve global exam integrity?

Proctor365 uses adaptive AI, advanced identity verification, and elastic monitoring to prevent fraud. Its rapid updates and compliance with data residency laws help secure exam integrity worldwide.

- What are the benefits of integrating proctoring software with an LMS?

Integrating proctoring software with your LMS enables single sign-on, streamlined scheduling, and real-time analytics. This integration minimizes support tickets and supports secure, user-friendly exam delivery.

- How does AI proctoring support fraud prevention in online exams?

AI proctoring uses machine learning to detect anomalies and suspicious behaviors, pairing automated risk scoring with human oversight. This dual approach is effective in preventing fraud and ensuring exam trustworthiness.