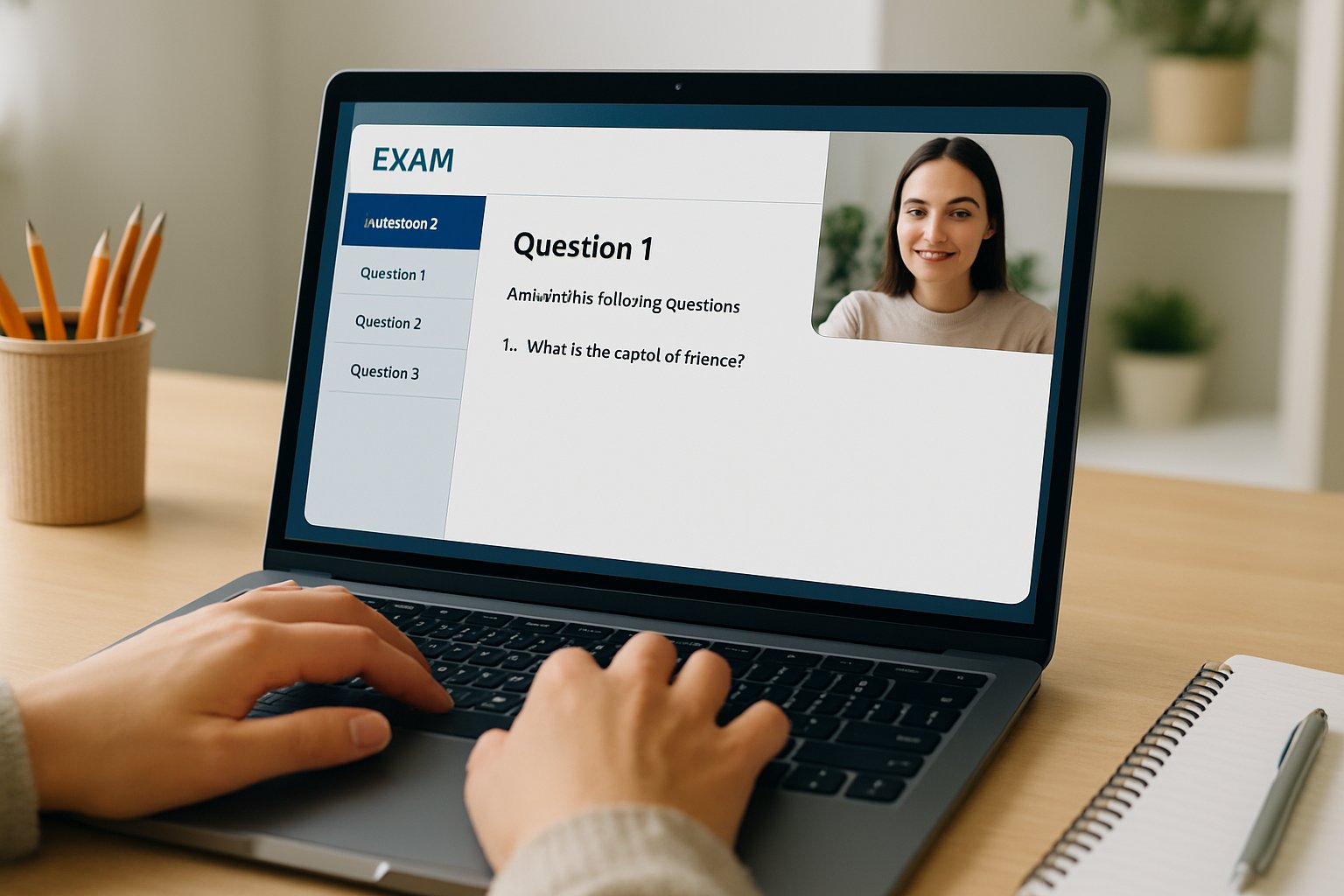

Academic integrity feels fragile in the generative-AI era. Consequently, universities now use remotely proctored exams to secure online assessments. However, successful adoption demands more than flipping a switch. This guide walks administrators through a proven, low-risk rollout. Along the way, it highlights why a short pilot, conservative settings, and transparent student communication matter. Moreover, it explains how an AI-proctored exam can coexist with privacy, accessibility, and legal duties.

Plan Remotely Proctored Exam

Begin with a clear rationale linked to learning outcomes. Then draft a one-page justification that explains why remote monitoring is necessary. Include the vendor choice, a risk analysis, and the alternatives offered. A 2024 Wiley survey found that 96% of instructors suspect cheating, which supports the case for deterrence.

Next, assemble stakeholders early. Invite faculty, disability services, legal counsel, and IT security. Their perspectives surface policy conflicts before launch.

Key takeaway: Cross-functional planning prevents last-minute blocks. Therefore, schedule the kickoff at least eight weeks before the remotely proctored exam date.

Verify Campus Policy Compliance

Check biometric, privacy, and data-retention rules first. Illinois's Biometric Information Privacy Act (BIPA) and the federal Family Educational Rights and Privacy Act (FERPA) impose strict consent requirements. Moreover, many campuses now mandate student opt-out pathways.

Document the data lifecycle. State what is recorded, who reviews it, and when deletion occurs. Publish this notice inside the syllabus and LMS. Doing so builds trust and legal defensibility.
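One way to keep the notice consistent between the syllabus and the LMS is to hold the data lifecycle in a small structured record and generate the text from it. The sketch below is illustrative only: the class name, field names, reviewer roles, and the 30-day retention period are assumptions, not values taken from any vendor or campus policy.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class DataLifecycleNotice:
    """Hypothetical record of what a proctoring session captures and for how long."""
    recorded: tuple[str, ...]    # what is recorded
    reviewers: tuple[str, ...]   # who reviews it
    retention_days: int          # days kept after the exam (assumed policy value)

    def deletion_date(self, exam_date: date) -> date:
        """Date by which the recordings should be deleted."""
        return exam_date + timedelta(days=self.retention_days)

    def render(self, exam_date: date) -> str:
        """Plain-text notice suitable for a syllabus or LMS page."""
        return (
            f"Recorded: {', '.join(self.recorded)}. "
            f"Reviewed by: {', '.join(self.reviewers)}. "
            f"Deleted by: {self.deletion_date(exam_date).isoformat()}."
        )


# Placeholder values; substitute the figures from your own campus policy.
notice = DataLifecycleNotice(
    recorded=("webcam video", "microphone audio", "screen capture"),
    reviewers=("course instructor", "trained proctoring reviewer"),
    retention_days=30,
)
print(notice.render(date(2025, 5, 12)))
```

Generating the wording from one record means the syllabus and the LMS page cannot drift apart when the retention period changes.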

Key takeaway: Transparency reduces disputes. Consequently, policy clarity shields staff during misconduct hearings.

Configure Core Technical Settings

Create the quiz inside Canvas, Blackboard, or Moodle. Then enable the vendor's LTI integration and select conservative presets. For a first AI-proctored exam, use webcam, microphone, and screen capture only. Keep eye-tracking sensitivity low, and disable room scans unless strictly required.

Avoid aggressive lockdown browser modes on day one. Instead, pilot “soft” restrictions that block copy-paste but allow multiple monitors if needed for accessibility. Additionally, require human confirmation for any automated high-severity flag.
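Writing the conservative preset down as a plain settings record makes it easier to review before the pilot. The following is a minimal sketch: every key name is hypothetical and does not correspond to any specific vendor's dashboard or LTI parameters, and the `preset_warnings` helper is likewise an assumption for illustration.

```python
# Hypothetical settings record; key names are illustrative only.
CONSERVATIVE_PRESET = {
    "webcam": True,
    "microphone": True,
    "screen_capture": True,
    "room_scan": False,                  # disabled unless strictly required
    "eye_tracking_sensitivity": "low",   # assumed to mean: raise flags sparingly
    "lockdown_mode": "soft",             # no aggressive lockdown on day one
    "block_copy_paste": True,
    "allow_multiple_monitors": True,     # may be needed for accessibility
    "human_review_for_high_severity": True,
}


def preset_warnings(settings: dict) -> list[str]:
    """Return the reasons a settings record departs from the conservative preset."""
    warnings = []
    if settings.get("room_scan"):
        warnings.append("Room scan is enabled; confirm it is strictly required.")
    if settings.get("eye_tracking_sensitivity") != "low":
        warnings.append("Eye-tracking sensitivity is not low.")
    if settings.get("lockdown_mode") != "soft":
        warnings.append("Lockdown mode is not 'soft'.")
    if not settings.get("human_review_for_high_severity"):
        warnings.append("High-severity flags lack human confirmation.")
    return warnings


# The preset itself should produce no warnings.
print(preset_warnings(CONSERVATIVE_PRESET))
```

A check like this can run against whatever settings export the chosen vendor provides, once the hypothetical keys are mapped to the real ones.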

Key takeaway: Conservative settings cut false positives. Therefore, technical simplicity fosters student confidence in the remotely proctored exam.

Prepare Student Readiness Guide

Students succeed when surprises disappear. Publish a readiness page at least one week before testing. Include hardware specs, installation links, and troubleshooting contacts. Provide a mandatory practice quiz that mirrors live settings.

Furthermore, detail accommodation procedures. Offer in-person or alternative assessments for learners without private spaces or adequate internet. EDUCAUSE stresses this equity step.

- Checklist: webcam, microphone, stable bandwidth, photo ID.

- Support: live chat link, phone hotline, email queue.

Key takeaway: Early practice reduces panic. As a result, help tickets drop on exam day.

Run Pilot And Review

Launch a pilot with 10–50 volunteers two weeks ahead. Collect flag logs, bandwidth failures, and feedback. Research shows automated indicators misfire under poor lighting, so empirical data guides threshold tuning.

Implement Human Review Workflow

Assign trained reviewers to inspect every medium or severe alert within 24 hours. Never penalize based solely on the algorithm. This safeguard satisfies civil-liberties guidance and boosts student fairness.
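The review rule can be sketched as a small triage routine. This is an illustration under stated assumptions: the `Flag` record, the severity labels, and both helper functions are hypothetical and not part of any vendor's API. Only the 24-hour window and the requirement for human confirmation come from the guidance above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

REVIEW_WINDOW = timedelta(hours=24)   # review window stated in the workflow
NEEDS_REVIEW = {"medium", "severe"}   # assumed severity labels


@dataclass
class Flag:
    """Hypothetical automated alert raised during an exam session."""
    student_id: str
    severity: str                               # "low", "medium", or "severe"
    raised_at: datetime
    reviewer_confirmed: Optional[bool] = None   # None until a human has reviewed


def review_deadline(flag: Flag) -> Optional[datetime]:
    """Deadline for human review, or None if the flag needs no review."""
    if flag.severity in NEEDS_REVIEW:
        return flag.raised_at + REVIEW_WINDOW
    return None


def may_escalate(flag: Flag) -> bool:
    """A flag may proceed to a misconduct process only after a human confirms it."""
    return flag.reviewer_confirmed is True


flag = Flag("s-001", "severe", datetime(2025, 5, 12, 10, 30))
print(review_deadline(flag))   # 2025-05-13 10:30:00
print(may_escalate(flag))      # False, because no reviewer has confirmed it yet
```

Keeping `reviewer_confirmed` as a three-state value separates "not yet reviewed" from "reviewed and dismissed", so an unreviewed flag can never be treated as a finding.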

Key takeaway: Pilots surface technical gaps. Therefore, iterative tuning protects exam integrity for the main remotely proctored exam.

Iterate After First Session

Post-exam analytics matter. Compare flag counts to confirmed incidents, student complaints, and completion rates. Moreover, track device failures and accessibility issues.

Measure Flag Accuracy Rates

Calculate the false-positive percentage. If the rate exceeds 5%, lower sensitivity or adjust lighting guidelines. Repeat the cycle for the next AI-proctored exam.

- Adjust settings in vendor dashboard.

- Update student guidance accordingly.

- Report findings to stakeholders.
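As a worked example of the accuracy check, the sketch below treats the false-positive rate as the share of automated flags that human review did not confirm. That definition is an assumption, since other definitions (for example, per exam session) are possible. The function names are illustrative, and the 5% limit is the one given above.

```python
FALSE_POSITIVE_LIMIT = 0.05  # the 5% limit from the guidance above


def false_positive_rate(total_flags: int, confirmed_incidents: int) -> float:
    """Fraction of automated flags that human review did not confirm."""
    if total_flags < 0 or not 0 <= confirmed_incidents <= total_flags:
        raise ValueError("counts must satisfy 0 <= confirmed_incidents <= total_flags")
    if total_flags == 0:
        return 0.0  # no flags raised, so nothing was falsely flagged
    return (total_flags - confirmed_incidents) / total_flags


def needs_tuning(total_flags: int, confirmed_incidents: int) -> bool:
    """True when the false-positive rate exceeds the limit."""
    return false_positive_rate(total_flags, confirmed_incidents) > FALSE_POSITIVE_LIMIT


# Example: 8 flags raised, 7 confirmed by reviewers, so 1 of 8 was a false positive.
rate = false_positive_rate(total_flags=8, confirmed_incidents=7)
print(f"{rate:.1%}")        # 12.5%
print(needs_tuning(8, 7))   # True
```

With small pilots the rate swings widely from a single flag, so it helps to report the raw counts alongside the percentage.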

Key takeaway: Data-driven tweaks sustain trust. Consequently, each successive remotely proctored exam runs smoother.

Frequently Asked Questions

- How does Proctor365’s AI proctoring technology prevent cheating during remotely proctored exams?

Proctor365’s AI proctoring uses real-time monitoring, screen capture, and identity verification to detect suspicious behavior. This robust system enhances exam integrity and fraud prevention while reassuring stakeholders about exam security.

- Why is a pilot phase important in rolling out a remotely proctored exam?

A pilot phase helps identify technical issues, calibrate AI thresholds, and gather feedback. This early testing ensures settings are optimized, reducing false positives and building trusted, seamless exam experiences.

- How does Proctor365 ensure accessibility and compliance during exams?

Proctor365 aligns with legal and educational standards by incorporating privacy safeguards, clear student communication, and alternative assessment options, ensuring accommodation for accessibility and adherence to data retention and consent policies.

- What technical settings does Proctor365 recommend for initial AI proctored exams?

For initial exams, Proctor365 advises conservative configurations including webcam, microphone, and screen capture. Settings are optimized to balance security with accessibility, reducing aggressive lockdown controls to minimize false flags and technical issues.