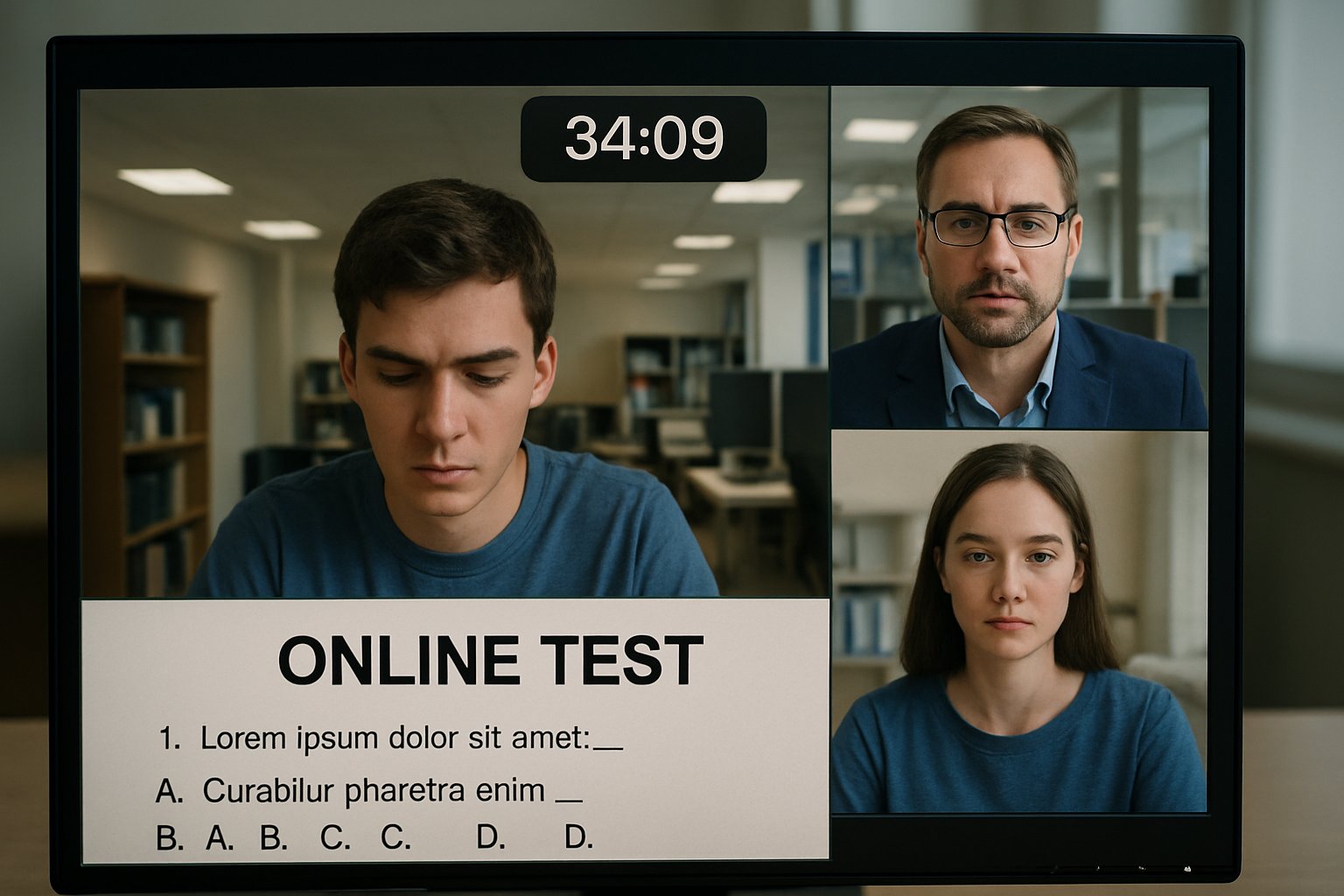

Universities now grapple with a clear question: does online test monitoring change how students score during high-stakes assessments? Administrators must balance integrity, privacy, and equity while the research base evolves rapidly. Updated evidence offers guidance yet still sparks debate.

Recent studies paint a mixed picture. A large study in PNAS found strong correlations between students' scores across exam formats. Conversely, a randomized field experiment found that webcam proctoring lowered average grades by roughly a quarter of a standard deviation.

Meanwhile, legal scrutiny and student anxiety complicate adoption decisions. Nevertheless, global demand is rising as institutions pursue scalable digital assessment. The evidence on online test monitoring remains nuanced.

For stakeholders, the consequences extend beyond grades to credential value and institutional reputation. National regulators also increasingly demand proof that digital assessments deter misconduct without harming equity. This article reviews the latest evidence and offers actionable guidance for decision-makers, with practical lessons that apply at any scale, from a single remotely proctored exam to national licensing.

Current Evidence Landscape Review

Chan and colleagues analyzed 2,010 students across 18 courses, comparing each student's in-person exam scores with their scores on unproctored online exams taken without any test monitoring. The correlations remained substantial, suggesting that cheating was not widespread.

These findings echo licensure data covering 14,097 professional candidates, whose scores and pass rates were similar across test-center and live remote formats. Many experts consider such parity reassuring.

- PNAS within-student correlation: high across 18 courses.

- Licensure mean effect size: Hedges' g = 0.19.

- Global market: USD 0.6-1.2 billion (mid-2020s estimates).

Overall, large observational studies suggest format alone need not shift achievement. Next, we inspect randomized evidence for deeper causal insight.

Randomized Trial Score Insights

A Spanish field experiment randomly assigned 412 students to webcam-on or webcam-off conditions. Students under active monitoring scored around 0.25 standard deviations lower. Researchers attribute the gap to blocked cheating rather than test anxiety.

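Because the webcam stream is itself a form of online test monitoring and students were assigned at random, the score gap can be attributed to monitoring rather than to pre-existing differences between groups. This causal evidence strengthens integrity claims.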
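The random assignment behind this causal reading can be sketched as follows. This is a hypothetical helper, not the study's actual procedure: it assumes simple seeded shuffling into two equal arms, whereas the researchers may have used a different (for example, stratified) design.

```python
import random


def assign_conditions(student_ids, seed=2024):
    """Randomly split students into two equal-sized arms (hypothetical helper)."""
    rng = random.Random(seed)  # seeded so the assignment can be reproduced and audited
    shuffled = list(student_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {
        "webcam_on": sorted(shuffled[:half]),
        "webcam_off": sorted(shuffled[half:]),
    }


# 412 anonymized student IDs, matching the study's sample size.
arms = assign_conditions(range(1, 413))
print({arm: len(ids) for arm, ids in arms.items()})  # {'webcam_on': 206, 'webcam_off': 206}
```

Because assignment depends only on the random draw, a systematic score gap between arms can be attributed to the condition itself. With a hypothetical exam standard deviation of 12 points, a 0.25 SD gap would correspond to about 3 points.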

Randomized data show that monitoring can deter dishonest score boosts. However, results may differ in other remotely proctored exam settings.

Large Cohort Score Trends

Professional licensure testing offers another lens. Across 14,097 candidates, live remote proctoring produced performance similar to test-center delivery. The average difference favored remote candidates by a Hedges' g of only 0.19.

Similarly, many course-level comparisons during the pandemic found parallel grade distributions, whether exams were minimally proctored or fully remotely proctored. Score integrity largely held.

Large cohorts reveal stability across modalities. Context, therefore, appears to matter more than technology alone in shaping online test monitoring outcomes.
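The 0.19 figure for the licensure data is a Hedges' g: a difference in means divided by the pooled standard deviation, multiplied by a small-sample correction. The sketch below uses the common approximation of that correction; the summary statistics are hypothetical, chosen only so the two groups sum to 14,097 candidates, and are not the published licensure data.

```python
from math import sqrt


def hedges_g(mean_a, sd_a, n_a, mean_b, sd_b, n_b):
    """Standardized mean difference (a minus b) with Hedges' small-sample correction."""
    pooled_sd = sqrt(((n_a - 1) * sd_a**2 + (n_b - 1) * sd_b**2) / (n_a + n_b - 2))
    d = (mean_a - mean_b) / pooled_sd  # Cohen's d using the pooled SD
    j = 1 - 3 / (4 * (n_a + n_b) - 9)  # common approximation of the correction factor
    return j * d


# Hypothetical summary statistics: remote (a) vs. test-center (b) candidates.
g = hedges_g(mean_a=71.9, sd_a=10.0, n_a=7000, mean_b=70.0, sd_b=10.0, n_b=7097)
print(f"Hedges' g = {g:.3f}")  # prints: Hedges' g = 0.190
```

With thousands of candidates the correction factor is almost exactly 1, so g is essentially Cohen's d here: a remote mean about a fifth of a standard deviation higher, or roughly 1.9 points on this hypothetical 10-point SD.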

Stress And Equity Concerns

Despite integrity gains, student wellbeing remains a pressing issue. Surveys show that some learners fear false flags and intrusive webcam requirements, and a study in the International Journal for Educational Integrity reported heightened distress during remotely proctored exams.

Privacy groups cite bias in facial detection and challenge room scans as unconstitutional. Moreover, unequal internet access can magnify disadvantage.

Equity gaps could erode trust if ignored. Therefore, institutions must align online test monitoring with humane, transparent safeguards.

Practical Implementation Guidance Steps

Stakeholders can mitigate risks through deliberate design.

- Choose an online test monitoring mode matching exam stakes and learner needs.

- Provide clear consent forms detailing data practices.

- Offer alternative testing for students lacking private spaces.

- Review AI flags quickly to avoid unfair delays.

- Continuously audit accuracy during each remotely proctored exam cycle.

Additionally, publish summary statistics on breaches and resolutions. Such openness reinforces confidence in online test monitoring policies.
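The flag-review and accuracy-audit steps above could feed those published statistics. The sketch below assumes a hypothetical 0-1 risk score per session and a human-review outcome for every reviewed session; the `Flag` record and `audit_threshold` helper are illustrative names, not any vendor's API.

```python
from dataclasses import dataclass


@dataclass
class Flag:
    session_id: str
    ai_score: float  # hypothetical 0-1 risk score from the proctoring system
    confirmed: bool  # outcome of human review


def audit_threshold(flags, threshold):
    """Summarize how sessions flagged at or above `threshold` fared in human review."""
    raised = [f for f in flags if f.ai_score >= threshold]
    confirmed = sum(f.confirmed for f in raised)
    precision = confirmed / len(raised) if raised else 0.0
    return {
        "threshold": threshold,
        "raised": len(raised),
        "confirmed": confirmed,
        "false_positives": len(raised) - confirmed,
        "precision": round(precision, 2),
    }


# Hypothetical reviewed sessions for one exam cycle.
reviewed = [
    Flag("s1", 0.95, True), Flag("s2", 0.90, True), Flag("s3", 0.82, False),
    Flag("s4", 0.75, True), Flag("s5", 0.60, False), Flag("s6", 0.55, False),
    Flag("s7", 0.40, False), Flag("s8", 0.30, False),
]
for t in (0.5, 0.7, 0.9):
    print(audit_threshold(reviewed, t))
```

In this toy data, raising the threshold from 0.5 to 0.9 cuts false positives from 3 to 0 but also misses one confirmed case (s4). That trade-off is what an audit should surface each cycle. Misconduct that was never flagged or reviewed stays invisible to this kind of check.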

Good governance turns surveillance into assurance. Next, let us examine the expanding commercial landscape.

Industry Market Growth Outlook

Market research foresees double-digit growth for proctoring services through 2031. Insight Partners projects revenues of USD 2.34 billion by 2031, a 15.5% compound annual growth rate (CAGR). Drivers include flexible scheduling, cost savings, and rising worldwide acceptance of online test monitoring.
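As a sanity check on the projection, compound growth can be run backwards to recover the starting market size the forecast implies. The 2024 base year (seven years of growth to 2031) is an assumption, since the forecast's base year is not given here.

```python
def implied_start_value(end_value, cagr, years):
    """Invert compound growth: end_value = start * (1 + cagr) ** years."""
    return end_value / (1 + cagr) ** years


# Assumption: 2024 base year, 2031 end year (7 years of growth).
start = implied_start_value(end_value=2.34, cagr=0.155, years=7)
print(f"implied 2024 market size: USD {start:.2f} billion")  # USD 0.85 billion
```

The result, about USD 0.85 billion, sits inside the USD 0.6-1.2B mid-2020s range listed earlier. A different base year would shift it, but not by enough to contradict that range for nearby years.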

Vendors now tout AI that detects AI-generated answers. However, independent audits remain scarce.

Commercial momentum drives rapid feature rollout. Consequently, buyers should demand verifiable evidence before adopting any new proctoring platform.

Legal And Policy Shifts

Courts increasingly scrutinize proctoring demands. In Ogletree v. Cleveland State University, a judge ruled that the university's mandatory room scan was unconstitutional. Meanwhile, some universities are switching vendors to reduce legal exposure.

Advocacy groups urge minimal intrusion and strong disability accommodations. Therefore, policy clarity should precede technology purchases.

Regulators will keep shaping acceptable surveillance limits. Thus, compliance teams must watch new rulings before scaling any remotely proctored exam initiative.

Conclusion And Next Steps

Research offers no single verdict on score impact. Observational studies suggest scores stay stable across formats, while randomized evidence points to cheating deterrence, and survey work flags heightened student stress. Equity, privacy, and legal challenges remain central, so thoughtful design, transparent policy, and continuous evaluation are essential. Market trends indicate that adoption will continue to accelerate.

Proctor365 meets these needs with AI-powered identity checks and scalable online test monitoring. Our platform verifies test-takers instantly, flags risks in real time, and protects data with robust encryption. Global universities and certification bodies already trust our secure, remotely proctored exam solution. Furthermore, customizable flag thresholds reduce false positives and administrative load. Discover how we elevate exam integrity at Proctor365.ai today.

Frequently Asked Questions

- How does online test monitoring impact student scores on high-stakes exams?

Research suggests remote proctoring produces only modest score shifts, deterring dishonest behavior while leaving overall performance largely intact. This balance supports exam integrity without drastically changing student results.

- What are the key benefits of AI-powered proctoring systems?

AI-powered proctoring, such as Proctor365, offers real-time identity verification, fraud prevention, and efficient flagging of suspicious behavior, strengthening exam integrity and supporting secure, scalable assessment.

- How do modern proctoring solutions address privacy and equity concerns?

Advanced online proctoring combines transparent data practices, alternative testing options, and bias mitigation strategies. This approach helps protect privacy and promote equity while aligning with legal standards and student well-being.

- Why is continuous evaluation important in remote proctoring?

Regular audits and swift reviews of AI flags improve monitoring accuracy and fairness. Continuous evaluation helps proctoring systems adapt to evolving research, compliance demands, and security enhancements.