Remote testing exploded during the pandemic. Consequently, many institutions rushed to deploy AI proctoring to curb cheating. However, students soon argued that webcams and room scans felt intrusive. Meanwhile, regulators began scrutinizing data practices. This article examines why discomfort persists and how thoughtful user-experience decisions can help AI proctoring feel less invasive without sacrificing integrity.

Proctoring Debate Intensifies Worldwide

AI proctoring adoption peaked in 2021 when 63 % of North American college sites mentioned such tools. Nevertheless, several campuses now report steep declines. At CU Boulder, only 6 % of instructors used Proctorio during 2023–24. Moreover, high-profile court cases and privacy rulings keep pressure on universities.

Key recent developments include:

- Ontario’s privacy commissioner ordered tighter limits on Respondus recordings in 2024.

- In Ogletree v. Cleveland State University (2022), a federal court held that a room scan before a remote exam was an unreasonable search under the Fourth Amendment.

- EFF lawsuits alleged vendor intimidation of critics.

These events reveal shifting expectations. Institutions crave scalable monitoring; regulators insist on restraint. The tension sets the stage for stronger design solutions. Therefore, understanding the evolving legal climate is essential before tackling UX fixes.

Growing oversight highlights urgency. Meanwhile, policy shifts directly influence product requirements for the next generation of AI proctoring platforms.

Regulators Demand Privacy Controls

Legal opinions now emphasize proportionality. For example, Ontario’s report criticized McMaster University for vague notices and broad data reuse. Similarly, the U.S. federal court in Ogletree treated a compelled room scan as an unreasonable search. Consequently, universities must demonstrate necessity, minimize collection, and offer alternatives.

Regulators consistently ask for five safeguards:

1. Clear consent flows

2. Narrow data scopes

3. Short retention windows

4. Human review of flags

5. Accessible appeal processes

Ignoring these demands can invite lawsuits or enrollment pushback. Nevertheless, compliance alone will not rebuild student trust. Institutions must translate legal language into transparent, supportive interfaces.

Robust governance sets boundaries. However, real acceptance hinges on addressing emotional and cultural concerns detailed in the next section.

Student Concerns Undermine Trust

Empirical studies show 70–90 % of surveyed learners feel anxious about webcams. AI proctoring also raises equity issues because low-income students may lack private spaces or reliable bandwidth. Furthermore, false positives disproportionately hurt neurodiverse or disabled test takers.

Common student objections include:

- “My home is my sanctuary; cameras invade it.”

- “Algorithms may misinterpret my tics as cheating.”

- “I cannot control roommates entering unexpectedly.”

Moreover, mystery around data storage fuels suspicion. Many candidates assume recordings live forever in vendor clouds. In contrast, transparent deletion policies markedly improve comfort levels.

These insights suggest that emotional safety affects academic performance. Consequently, UX teams must design for empathy as well as compliance.

Understanding learner fears clarifies priorities. The next section turns to specific design levers that mitigate those fears.

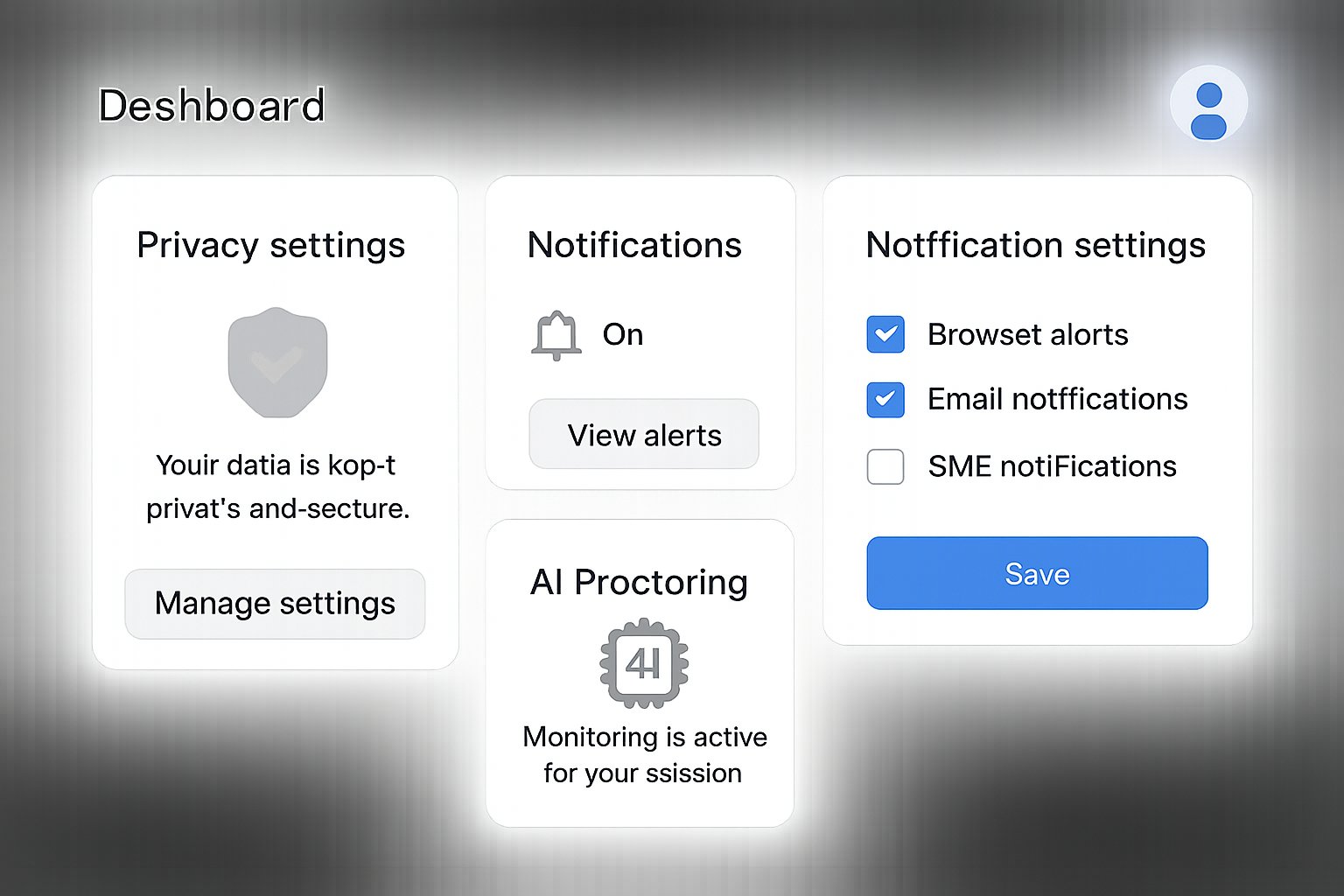

UX Levers Reduce Intrusion

Minimize Data Collection

Edge inference keeps raw video on the device and transmits only short clips when a flag triggers. Additionally, default settings should disable microphone capture unless an instructor explicitly requires audio.
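The idea can be illustrated with a minimal sketch, assuming a rolling on-device buffer and a local detector that supplies the `flagged` signal. The names `CaptureSettings` and `EdgeMonitor`, the buffer size, and the in-memory "upload" list are all hypothetical and do not describe any vendor's implementation.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class CaptureSettings:
    # Assumed default: the microphone stays off unless an instructor opts in.
    microphone_enabled: bool = False
    # Hypothetical clip length: number of recent frames kept around a flag.
    clip_frames: int = 5


@dataclass
class EdgeMonitor:
    settings: CaptureSettings = field(default_factory=CaptureSettings)
    uploaded_clips: list = field(default_factory=list)

    def __post_init__(self):
        # Rolling buffer: older frames fall off and never leave the device.
        self._buffer = deque(maxlen=self.settings.clip_frames)

    def process_frame(self, frame, flagged: bool) -> None:
        """Keep the frame locally; hand off a short clip only on a flag."""
        self._buffer.append(frame)
        if flagged:
            # Stand-in for a network upload of the short clip.
            self.uploaded_clips.append(list(self._buffer))
            self._buffer.clear()


monitor = EdgeMonitor()
for i in range(10):
    # Pretend the local detector raises a flag on frame 7 only.
    monitor.process_frame(f"frame-{i}", flagged=(i == 7))
```

In this sketch, only the five frames ending at the flagged one are queued for upload; every other frame is discarded on the device.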

Explainable Flag Feedback

A calm sidebar indicator can show a message such as “Face not detected, please adjust camera.” Consequently, students stay informed and can self-correct before flags escalate.
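One way to keep such feedback consistent is a lookup from internal flag codes to plain-language messages. The sketch below is illustrative only: the flag codes, the fallback text, and every message except the camera prompt quoted above are assumptions.

```python
# Hypothetical flag codes mapped to calm, actionable messages.
FLAG_MESSAGES = {
    "face_not_detected": "Face not detected, please adjust camera.",
    "low_light": "The image is dark. Try turning on a light.",
    "connection_unstable": "Connection is unstable. Your answers are still saved.",
}

# Shown for any code without a dedicated message (assumed wording).
DEFAULT_MESSAGE = "Monitoring needs attention. Your exam is still running."


def student_message(flag_code: str) -> str:
    """Return the sidebar text a student sees for a given flag code."""
    return FLAG_MESSAGES.get(flag_code, DEFAULT_MESSAGE)
```

A call such as `student_message("face_not_detected")` returns the camera prompt, and unknown codes fall back to the neutral default rather than exposing an internal error.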

Human-In-The-Loop Review

AI proctoring should alert trained reviewers, not automatically fail exams. Therefore, final judgments gain context and fairness.
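A rough sketch of that rule, assuming a simple in-memory queue: a flag starts as pending and can affect an exam only after a named human reviewer confirms it. The `Decision`, `Flag`, and `ReviewQueue` names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    PENDING = "pending"
    DISMISSED = "dismissed"
    CONFIRMED = "confirmed"


@dataclass
class Flag:
    exam_id: str
    reason: str
    decision: Decision = Decision.PENDING
    reviewer: Optional[str] = None


class ReviewQueue:
    def __init__(self):
        self._flags = []

    def raise_flag(self, exam_id: str, reason: str) -> Flag:
        """Called by the AI: records a flag but decides nothing."""
        flag = Flag(exam_id, reason)
        self._flags.append(flag)
        return flag

    def resolve(self, flag: Flag, reviewer: str, decision: Decision) -> None:
        """Called by a human reviewer: the only path to a final decision."""
        if not reviewer:
            raise ValueError("a named human reviewer is required")
        if decision is Decision.PENDING:
            raise ValueError("a resolution must dismiss or confirm the flag")
        flag.reviewer = reviewer
        flag.decision = decision

    def exam_outcome_affected(self, exam_id: str) -> bool:
        """True only if a human has confirmed at least one flag."""
        return any(
            f.exam_id == exam_id
            and f.decision is Decision.CONFIRMED
            and f.reviewer is not None
            for f in self._flags
        )
```

The design choice here is that the automated path has no method that changes an exam outcome; only `resolve`, which requires a reviewer name, can do so.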

Offer Authentic Alternatives

Open-book exams, randomized problem banks, or project submissions often remove surveillance needs entirely. Nevertheless, where identity verification matters for high-stakes exams, in-person centers or live human proctors should remain available.

Thoughtful UX choices transform perceptions. The checklist that follows ties these concepts to operational steps.

Implementation Checklist For Teams

Product managers and academic technologists can follow this staged roadmap:

- Run necessity and equity assessments before deploying AI proctoring.

- Configure local inference and 14-day deletion by default.

- Build a sandbox practice exam that mirrors real monitoring.

- Draft plain-language consent screens citing purpose, access, and retention.

- Ensure every flag funnels to a human reviewer within 24 hours.

- Publish appeal procedures in course syllabi.

- Create accommodations such as campus labs or oral assessments.

Each item aligns with regulator guidance and student feedback. Consequently, institutions can reduce risk while enhancing user confidence.
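The two time limits in the checklist, 14-day deletion and 24-hour human review, can be expressed as simple checks. This is a sketch under stated assumptions: the function names are hypothetical, timestamps are assumed to be timezone-aware, and the choice to delete exactly at the 14-day mark is an illustration rather than a regulatory requirement.

```python
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(days=14)         # default deletion window from the checklist
REVIEW_DEADLINE = timedelta(hours=24)  # human review window from the checklist


def should_delete(recorded_at: datetime, now: datetime) -> bool:
    """True once a recording has reached the retention limit."""
    return now - recorded_at >= RETENTION


def review_overdue(flagged_at: datetime, now: datetime, reviewed: bool) -> bool:
    """True if a flag has waited longer than the review deadline."""
    return not reviewed and now - flagged_at > REVIEW_DEADLINE


recorded = datetime(2024, 1, 1, tzinfo=timezone.utc)
print(should_delete(recorded, recorded + timedelta(days=14)))
print(review_overdue(recorded, recorded + timedelta(hours=25), reviewed=False))
```

A scheduled job could run both checks daily, deleting expired recordings and escalating any flag that has not reached a reviewer in time.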

Structured processes accelerate adoption. Meanwhile, strategic foresight prepares teams for future regulatory shifts.

Future Outlook And Recommendations

Vendor roadmaps increasingly tout privacy-by-design. Moreover, academics predict remote assessment will persist for continuing education and global programs. Therefore, competition will favor platforms that combine rigorous security with respectful UX.

Key trends to monitor include biometric legislation expansions, cross-border data flow restrictions, and AI transparency mandates. Consequently, institutions should negotiate vendor contracts with deletion triggers and audit rights.

Adopting flexible assessment models also future-proofs pedagogy. In contrast, clinging to surveillance may deter enrollment in privacy-sensitive regions.

Preparing for emerging norms ensures resilience. The conclusion distills the article’s main insights.

Conclusion And Next Steps

AI proctoring remains valuable for scale and deterrence. However, unchecked surveillance erodes trust. Regulators now demand proportionate monitoring, while students expect respect and choice. By minimizing data, explaining flags, integrating human reviewers, and offering authentic alternatives, universities can safeguard integrity without invading privacy.

Industry professionals should audit current workflows, pilot edge-based solutions, and revise policy language this semester. Furthermore, continuous user research will reveal evolving expectations. Take action now to transform remote testing from a legal headache into a distinct service advantage.

Frequently Asked Questions

- What factors drove the rapid adoption of AI proctoring during the pandemic?

The surge in remote testing to maintain academic integrity led institutions to quickly deploy AI proctoring, despite concerns over privacy, as they sought scalable solutions to prevent cheating.

- How have privacy and regulatory concerns impacted AI proctoring practices?

Heightened regulatory scrutiny, court rulings, and privacy commissioner actions have forced institutions to re-evaluate intrusive practices like room scans, demanding clearer consent and minimized data collection.

- What common concerns do students have about AI proctoring?

Students express anxiety over constant surveillance, feel that room scans invade personal space, and worry about false positives, especially for neurodiverse or disabled individuals, impacting their academic performance.

- How do UX improvements help reduce the intrusiveness of AI proctoring?

Implementing UX strategies such as edge inference, explicit flag feedback, and human review reduces data collection and enhances transparency, thereby mitigating the intrusive nature of automated proctoring.

- Which regulatory safeguards are critical for fair AI proctoring?

Key safeguards include clear consent flows, narrowly scoped data collection, short retention times, human review of flags, and accessible appeal processes to ensure both compliance and respect for student privacy.

- How can institutions provide viable alternatives to conventional AI proctoring?

Institutions can offer alternatives such as open-book exams, randomized problem banks, in-person proctoring, or dedicated testing labs, helping address ethical concerns and reduce reliance on constant surveillance.

- What future trends might influence the development of AI proctoring platforms?

Emerging biometric legislation, cross-border data flow restrictions, and AI transparency mandates are likely to drive vendors toward privacy-by-design and more user-centered approaches in remote testing environments.